Enterprise AI Governance: What Regulators Are Already Enforcing

A $2.5 million enforcement action in Massachusetts shows what happens when AI programs scale without an enterprise AI governance framework. Here is what the companies getting it right actually do.

Companies lost an average of $4.4 million each to AI-related risks in 2025, and most had deployed AI without an enterprise AI governance framework.

Only 2% of companies meet full responsible AI standards, yet those companies suffer 39% lower financial losses when incidents occur.

Morgan Stanley, Kaiser, and Unilever each caught governance failures before regulators did.

What are the key elements of AI governance, and what happens when none of them exist?

In July 2025, a student loan applicant in Massachusetts submitted an application to Earnest Operations LLC. An algorithm decided her eligibility, her interest rate, and her loan terms. That sounds efficient on paper, except that no human reviewed whether the algorithm was fair and no one had tested it for discrimination. The system simply ran, and the result is a case study in what happens when none of those elements exist.

Massachusetts Attorney General Andrea Joy Campbell's office investigated and found that Earnest's AI underwriting models produced a disparate impact on Black, Hispanic, and non-citizen applicants. On July 10, 2025, the AG announced a $2.5 million settlement. The terms required annual fair lending testing, written AI governance protocols, internal controls, and ongoing reporting to the AG's office.

Notice that no AI-specific law was needed; existing consumer protection and fair lending statutes were sufficient and enforceable.

This is the central lesson for every executive deploying AI today. While most enterprises treat AI governance as a compliance layer they add after deployment, that approach guarantees the exact regulatory exposure they're trying to avoid. Organizations that embed governance directly into their existing risk, legal, and operational frameworks before AI goes live move faster, scale safer, and present a defensible posture when regulators come knocking.

What Are the Key Elements of an AI Governance Framework?

An enterprise AI governance framework is the set of policies, processes, roles, and controls that determine how AI systems are developed, deployed, monitored, and corrected. It answers four operational questions:

- Who is accountable when AI causes harm?

- How are models tested for bias before and after launch?

- What triggers a human review of an automated decision?

- How does the organization detect model drift once a system is live?

Without answers to these questions, an AI program is an open vulnerability for an organization.
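To make the second question concrete, here is a minimal sketch of one common screening test: comparing approval rates across groups and flagging any group whose rate falls well below a reference group's. The function names, the sample data, and the 0.8 cutoff (the "four-fifths" rule of thumb borrowed from US employment-selection guidance) are illustrative assumptions, not the test any regulator or company named in this article prescribes.

```python
from collections import Counter


def approval_rates(decisions):
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals, approved = Counter(), Counter()
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def adverse_impact_ratios(decisions, reference_group):
    """Divide every group's approval rate by the reference group's rate."""
    rates = approval_rates(decisions)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return {g: r / ref for g, r in rates.items()}


def flag_groups(decisions, reference_group, threshold=0.8):
    """Return groups whose ratio falls below the (assumed) screening threshold."""
    ratios = adverse_impact_ratios(decisions, reference_group)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Hypothetical data: group A approved 80 of 100, group B approved 50 of 100.
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)
flagged = flag_groups(sample, reference_group="A")
```

A flag from a check like this is a trigger for investigation, not a legal conclusion. Running the same check on a schedule after launch turns it into a post-launch control as well.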

An AI governance framework template is applied across three dimensions: people, process, and technology. Integrating these dimensions is critical to an organization’s financial well-being. According to EY's Responsible AI Pulse survey, 99% of organizations reported financial losses from AI-related risks in the past year. Nearly two-thirds suffered losses exceeding $1 million, and the average loss per company was $4.4 million.

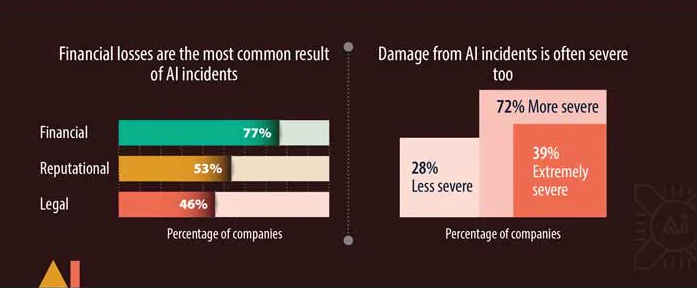

The fallout from AI incidents is increasingly well-documented. The Infosys Knowledge Institute's August 2025 report found that 95% of C-suite leaders reported AI incidents in the past two years, with 39% describing the damage as extremely severe. The most common result of AI incidents? Financial losses (77%), closely followed by reputational and legal setbacks. And while only 2% of companies meet full responsible AI control standards, those that do experience 39% lower financial losses and 18% less severe damage when incidents occur.

Regulatory pressure is adding urgency. The EU AI Act enters full enforcement in August 2026, with fines of up to €35 million or 7% of global annual turnover for the most serious violations. In the U.S., 28 states had enacted targeted AI laws by mid-2025. The Earnest enforcement action shows that regulators do not need new laws; they simply need evidence that your AI caused harm.

Morgan Stanley, Kaiser Permanente, and Unilever each faced a version of this challenge. What they built in response, and what it produced, is worth examining for any organization.

Why Do Most AI Governance Programs Fail?

There are three main signals that indicate an AI governance program is failing.

Policy Theater

Most companies have an AI ethics policy. Few have a mechanism to enforce it. They appoint a responsible AI officer, publish a set of principles, and treat the resulting document as governance.

It is not. A March 2025 peer-reviewed paper in Springer Nature's Digital Society reviewed nearly 100 such codes adopted globally and found their real-world effects to be "modest"—a phenomenon scholars call "ethics washing." Earnest Operations illustrates exactly what ethics washing looks like under enforcement: the company may have had written AI policies, but no operational mechanism existed to test whether its models were producing discriminatory outcomes. The policy and the production system were never connected.

Governance Designed for Yesterday's AI

A one-time model review at deployment is not sufficient for AI systems that learn and drift continuously. McKinsey's June 2025 agentic AI report found that AI teams have "operated in silos, developing AI models independently from core IT, data, or business functions." Static governance frameworks cannot catch bias that appears six months after launch. Alert fatigue, unchecked automation, and inadequate escalation pathways create a gap between what governance says and what happens at the point of decision.
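One way to move from a one-time review to continuous monitoring is a scheduled drift check that compares live model scores against the scores seen at launch. The sketch below uses the Population Stability Index (PSI), a widely used drift statistic. The function name, the bin count, and the alert threshold are assumptions chosen for illustration; the 0.25 cutoff is a common rule of thumb, not a standard drawn from the reports cited here.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a live sample.

    Both inputs are non-empty lists of numeric scores. Bin edges are
    derived from the baseline's range; live values outside that range
    are clipped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at a tiny value so log() never sees zero.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical scores: the live population has shifted upward since launch.
baseline_scores = [i / 100 for i in range(100)]
live_scores = [min(s + 0.3, 0.99) for s in baseline_scores]

DRIFT_ALERT = 0.25  # assumed rule-of-thumb threshold
needs_review = psi(baseline_scores, live_scores) > DRIFT_ALERT
```

The design point is the schedule, not the statistic: any drift measure works only if it runs repeatedly in production and a breach routes to a named owner.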

Governance That Stops at Deployment

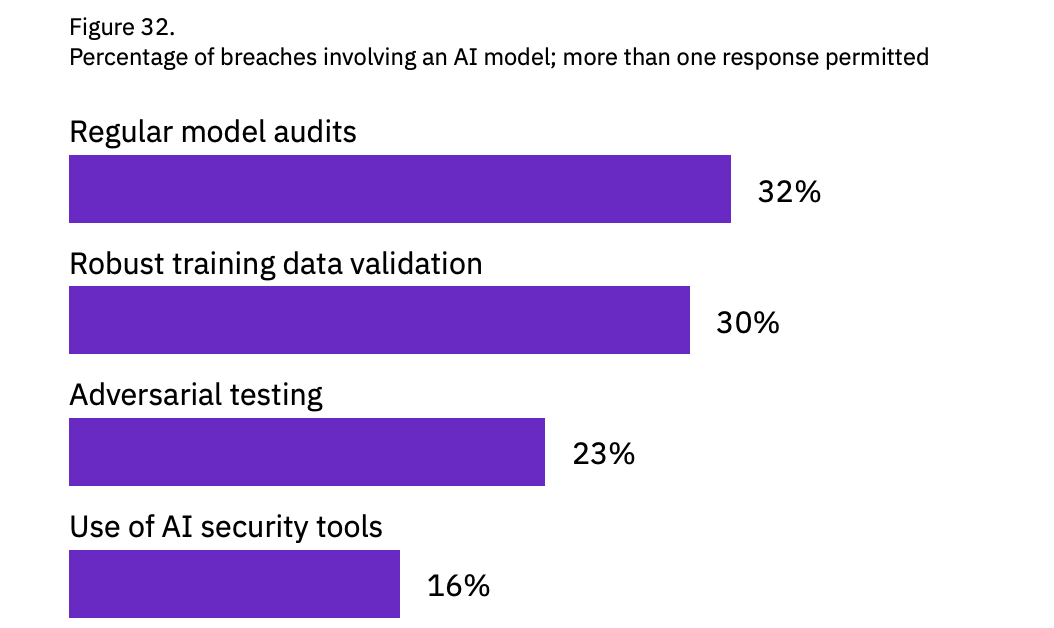

Most enforcement risk sits not at launch, but in production. Models drift. Conditions change. Discriminatory outcomes can emerge months after a one-time review cleared a project. IBM's Cost of a Data Breach Report 2025 found that 63% of organizations lack AI governance initiatives, and that shadow AI adds $670,000 to the average cost of a data breach. In the same report, IBM found that close to two-thirds of breached organizations were not performing regular audits targeted at risk detection.

The concern is particularly acute for US-based organizations, where the average cost per data breach reached an all-time high of $10.22M in 2025. Governance with no post-deployment monitoring cannot catch the problems that regulators will eventually find.

Figure: Average cost per data breach in 2025, global ($4.44M) versus US ($10.22M).

Morgan Stanley: Who’s Responsible for What AI Says?

The answer at Morgan Stanley is unambiguous: the humans press the button. That is not a metaphor; it’s the governance philosophy of Jeff McMillan, the firm's Head of Firmwide AI, and it is baked into every tool the firm deploys.

Morgan Stanley manages over 16,000 financial advisors, subject to continuous regulatory scrutiny. Every client interaction carries compliance risk. The firm launched AI @ Morgan Stanley Assistant in September 2023, a GPT-4-powered tool that gives advisors natural-language access to more than 100,000 proprietary research documents. It followed with AI @ Morgan Stanley Debrief, which generates meeting notes, surfaces action items, and drafts client follow-up emails with client consent.

The results are striking. 98% of Financial Advisor teams adopted the Assistant. Document retrieval efficiency improved from 20% to 80%, according to OpenAI's case study on the deployment.

What enabled this scale was the AI governance oversight structure. Before any use case goes live, Morgan Stanley subjects it to an evaluation framework that tests model performance against real-world scenarios, with human expert graders scoring outputs at every step:

- Daily regression testing catches performance degradation before it reaches advisors.

- Advisors review and adjust all AI-generated outputs before finalizing them.

- No material decision is fully automated.

Morgan Stanley also appointed a dedicated Head of Firmwide AI reporting directly to the firm's co-presidents, placing AI governance oversight at the top of the executive structure. Rather than assigning governance to compliance and waiting for a policy, Morgan Stanley treats its evaluation framework as engineering infrastructure. Governance is how the product is built.
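The daily regression testing described above can be pictured as a gate that re-scores a fixed evaluation set and fails when quality drops below a recorded baseline. This is a minimal sketch of that general pattern, not Morgan Stanley's actual framework: `EvalCase`, `score`, `regression_gate`, the keyword-matching scorer (a crude stand-in for human expert grading), and the tolerance value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected_keywords: tuple[str, ...]


def score(answer, case):
    """Fraction of expected keywords found in the answer (case-insensitive)."""
    if not case.expected_keywords:
        return 1.0
    text = answer.lower()
    hits = sum(1 for kw in case.expected_keywords if kw.lower() in text)
    return hits / len(case.expected_keywords)


def regression_gate(model, cases, baseline, tolerance=0.02):
    """Return (passed, mean_score); fail if the mean drops below baseline - tolerance."""
    mean = sum(score(model(c.prompt), c) for c in cases) / len(cases)
    return mean >= baseline - tolerance, mean


# Hypothetical evaluation set and a stub standing in for the deployed model.
cases = [
    EvalCase("What is the fund's expense ratio?", ("expense ratio",)),
    EvalCase("Summarize the dividend outlook.", ("dividend", "yield")),
]


def stub_model(prompt):
    return "The expense ratio is unchanged and the dividend was maintained."


passed, mean_score = regression_gate(stub_model, cases, baseline=0.75)
```

In a real pipeline the gate would run on a schedule against the production model, and a failure would block release or page an owner rather than simply return `False`.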

"This technology makes you as smart as the smartest person in the organization. Each client is different, and AI helps us cater to each client's unique needs."

— Jeff McMillan, Head of Firmwide AI, Morgan Stanley

Kaiser Permanente: When the Algorithm Flags a Patient, Who Decides What Happens Next?

At Kaiser Permanente, a nurse decides. That answer is the result of deliberate governance in healthcare AI design rather than default practice.

Kaiser needed a system to monitor thousands of patients simultaneously for deterioration, around the clock, across 21 Northern California hospitals. The risk was not only clinical. Sending alerts directly to frontline clinicians without filtering would create unmanageable volume. Alert fatigue is itself a patient safety threat.

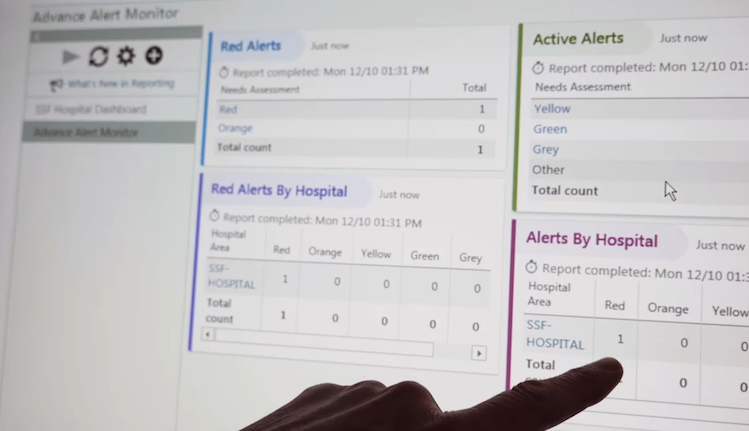

Kaiser built the Advance Alert Monitor (AAM) to analyze electronic health record data every hour, generating risk scores that give clinical staff up to 12 hours of advance warning. The peer-reviewed results, published in the Joint Commission Journal on Quality and Patient Safety (August 2022), are among the strongest in clinical AI: a mortality rate of 9.8% in the AAM group versus 14.4% in the control group, more than 500 deaths prevented annually, and a 10% reduction in high-risk 30-day readmissions.

The governance architecture is what makes those numbers possible. AAM alerts route exclusively to a dedicated team of Virtual Quality Nurse Consultants (VQNCs), who review every alert hourly before escalating to Rapid Response Teams. This eliminates alert fatigue at the point of care. Standardized clinical protocols are embedded into the escalation workflow, so every alert triggers a defined response. Frontline clinicians helped design the system before deployment, per Kaiser's own account of the program.

"It's important for our workforce to understand precisely how a new technology works and where it's going to fit into their workflow, and for them to guide us."

— Dr. Vincent Liu, Senior Research Scientist, Kaiser Permanente

The VQNC team is not an add-on. It is the governance layer — a new professional role whose entire function is to be the human checkpoint between the algorithm and the clinical decision.

How Does Unilever Keep 500 Global AI Systems Accountable?

With a traffic light.

The answer sounds simple, but the mechanics behind it are not.

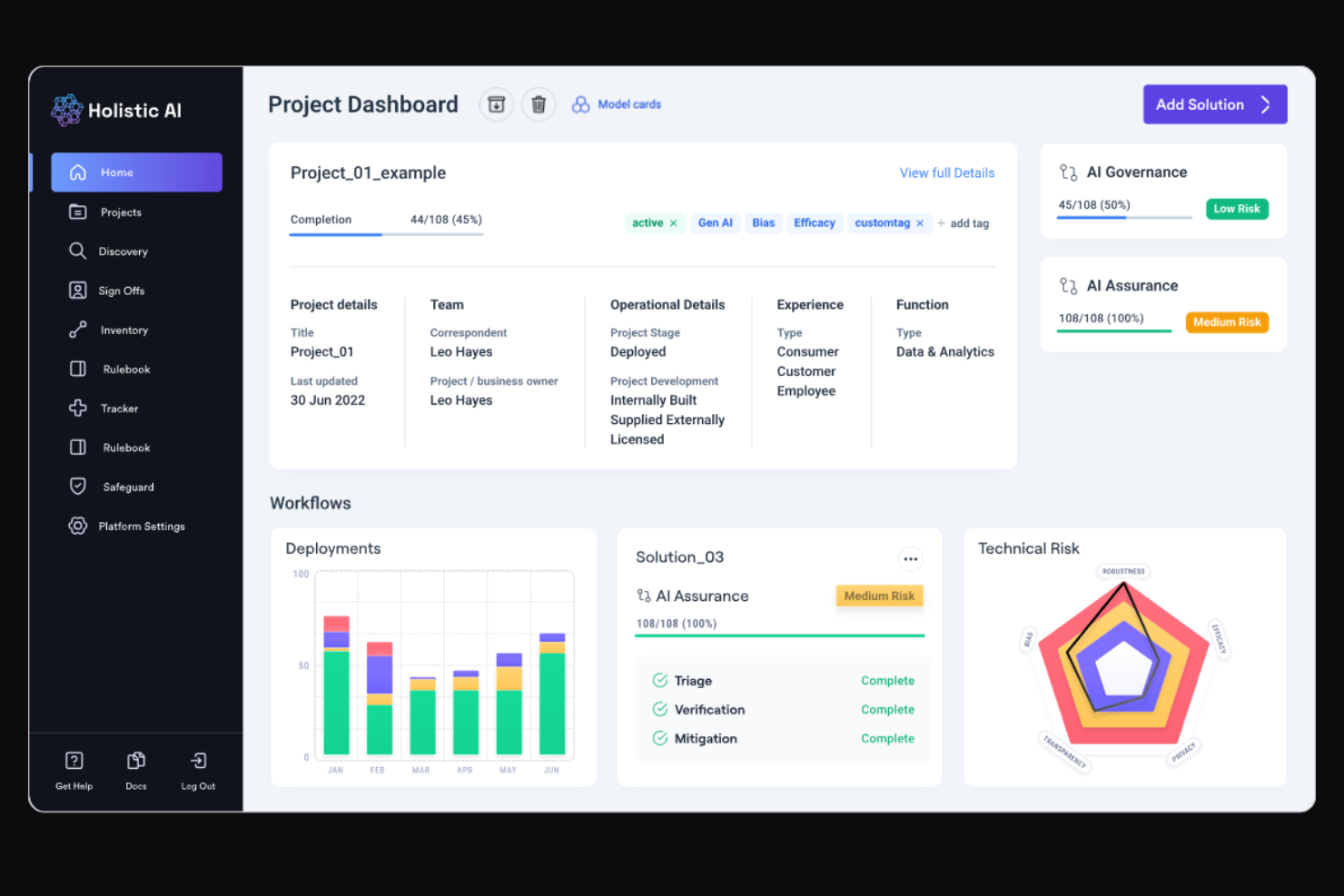

Unilever operates more than 500 AI systems globally across R&D, supply chain, manufacturing, and marketing. The challenge is not whether to review AI projects. It is how to do so consistently without killing deployment velocity. Unilever built a structured AI assurance platform with Holistic AI. Every project goes to a cross-functional review board, including legal, HR, and technology leadership, before approval.

The platform assigns a red, amber, or green rating at three stages: initial triage, detailed analysis, and post-mitigation review. Domains assessed include explainability, robustness, efficacy, bias, and privacy. A red rating blocks deployment. Any AI decision with significant individual impact requires human oversight by design, not by exception. According to MIT Sloan Management Review's account of the program, a senior executive board makes all final deployment decisions.
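The logic of a rating that carries consequences is simple enough to sketch. The example below assumes a "worst domain wins" aggregation across the five domains named above; that aggregation rule, the function names, and the output fields are illustrative assumptions, since the published accounts do not describe how the platform combines domain ratings.

```python
from enum import IntEnum


class Rating(IntEnum):
    GREEN = 0
    AMBER = 1
    RED = 2


DOMAINS = ("explainability", "robustness", "efficacy", "bias", "privacy")


def overall_rating(domain_ratings):
    """Assumed rule: the overall rating is the worst rating of any domain."""
    missing = [d for d in DOMAINS if d not in domain_ratings]
    if missing:
        raise ValueError(f"unrated domains: {missing}")
    return max(domain_ratings[d] for d in DOMAINS)


def deployment_decision(domain_ratings, significant_individual_impact):
    """Summarize the gate: red blocks deployment; high-impact uses need a human."""
    rating = overall_rating(domain_ratings)
    return {
        "rating": rating.name,
        "blocked": rating is Rating.RED,
        "human_oversight_required": significant_individual_impact,
    }


# Hypothetical project: clean everywhere except a red bias finding.
ratings = {d: Rating.GREEN for d in DOMAINS}
ratings["bias"] = Rating.RED
decision = deployment_decision(ratings, significant_individual_impact=True)
```

Refusing to rate a project with an unassessed domain is a deliberate choice in this sketch: a gap in the review should stop the process rather than default to green.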

Of the 150+ AI projects formally reviewed in late 2024, half required adjustments for bias, transparency, or performance before approval. That’s not a bad thing; it’s the system at work. Unilever trained 23,000 employees in the responsible use of AI by the end of 2024 and aligned its enterprise AI governance framework globally with EU AI Act risk tiers.

"As the deployments of these systems grow, we cannot underestimate the importance of making sure it works in a responsible manner."

— Andy Hill, Chief Data Officer, Unilever

Unilever's governance works because it has consequences. A red rating stops a project. That’s the difference between responsible AI and a policy document.

What Happens to Companies That Wait to Create AI Governance Frameworks?

Earnest Operations can answer that question. The company now has the governance structure it needed before deployment. A regulator mandated it … at a cost of $2.5 million plus remediation.

What each of the above case studies shows is that the responsible use of AI is a matter of operational design. An enterprise AI governance framework built on principles alone will not hold up under enforcement. Yet many organizations are not equipped to build the operational side: an EY survey found that only 12% of C-suite respondents could correctly identify the appropriate controls for five common AI risks.

For organizations wondering when to start implementing an AI governance framework, there’s no such thing as too early.
