The Cautious Rollout of AI in Banking Is About to Accelerate

Banking's cautious AI rollout stems from concerns about regulatory risks and accuracy. Success requires RAG systems, model-risk governance, and a focus on customer experience and operational use cases.

Commonwealth Bank of Australia’s failed AI voice bot rollout demonstrates why banks need careful governance before scaling generative AI systems.

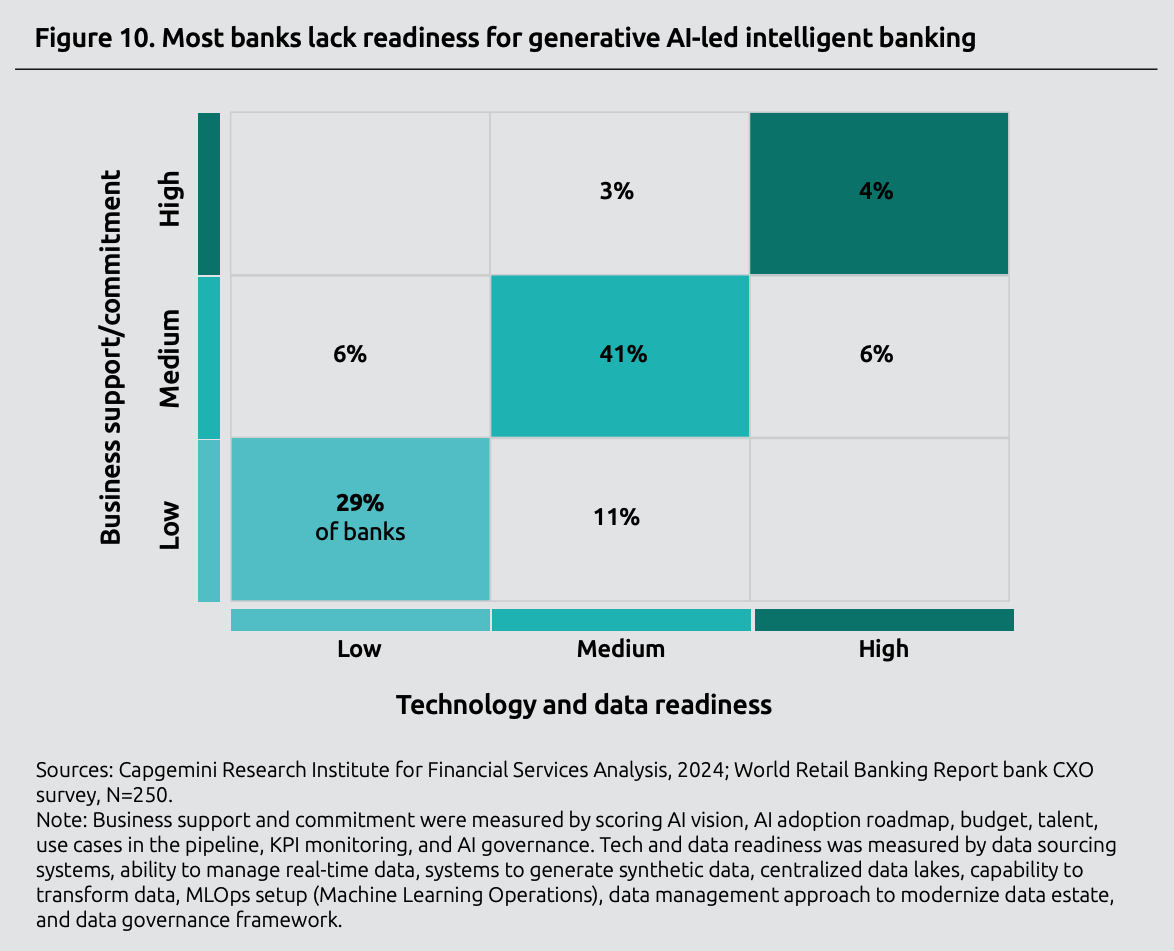

Only 4-6% of banks are ready to scale AI enterprise-wide, despite 80% of executives seeing promise in the technology.

Success requires RAG for accuracy, strong model-risk governance, and a pilot-to-scale approach focused on customer experience and operations.

In early 2025, the Commonwealth Bank of Australia (CBA) rolled out a bold experiment that would soon prove a cautionary tale for AI in banking. The bank introduced AI-powered voice bots designed to modernize its call centers, replacing 45 customer service roles with automated systems. The experiment aimed to reduce call volume by 2,000 calls per week while showcasing the bank's innovation.

Instead, within days, customers grew frustrated with confusing responses, call volumes spiked higher than before, and supervisors scrambled to cover gaps. The union mobilized, headlines screamed about AI failures, and CBA was forced into a humiliating reversal, hastening to reinstate all 45 displaced workers.

The lesson was clear: deploying generative AI in banking without careful governance is risky. Unlike tech or retail firms, where a chatbot mistake is inconvenient but rarely catastrophic, banks operate in a tightly regulated, trust-driven environment. The stakes are higher and the room for error is smaller, so a bank's AI strategy must be correspondingly more watertight.

Banking Needs AI Now More Than Ever

The urgency for AI adoption in banking has never been greater. Competitive pressure from neobanks and fintech companies is intensifying—Revolut expanded from the UK to the US market and reached $1 billion in revenue. At the same time, Chime now has over 8.6 million users (as of March 2025). These digital-native firms can deploy AI-powered features in weeks rather than months. Meanwhile, customer expectations have been shaped by Amazon's seamless recommendations and Netflix's personalization, creating demand for similarly intuitive banking experiences.

The post-pandemic digital acceleration compounds this pressure. By 2024, the total number of digital banking users worldwide was projected to exceed 3.6 billion. Rising operational costs due to inflation and regulatory compliance are forcing banks to seek new efficiencies, making AI automation not only attractive but also essential for maintaining profitability.

Why AI in Banking Adoption Has Been Slower

Across most other industries, generative AI (GenAI) is scaling fast. Healthcare providers are using it to summarize patient records. Retailers are cutting customer response times by as much as 40%. Media companies are accelerating production cycles. But when it comes to the banking sector, AI is advancing more cautiously.

A 2024 Capgemini report adds stark detail: while 80% of bank executives see promise in generative AI, only around 4–6% of banks are prepared to scale it across the enterprise. AI is poised to chip away at banking's productivity challenges, but for banks, caution isn't inertia; it's a reflection of what's at stake. A hallucinated AI response that misstates a policy, misclassifies a transaction, or offers faulty compliance advice can trigger fines, regulatory scrutiny, or reputational harm.

What the Banking Industry Is Up Against

Regulatory Uncertainty and Compliance Risk

Generative AI is powerful precisely because it can synthesize information and produce language that feels human. But this strength is also its weakness: without proper guardrails, it can invent facts, a failure known as hallucination. In sectors like entertainment, this is a nuisance; in banking, it's a liability.

The Financial Stability Board has warned that hallucinations, fraud, disinformation, and inadequate governance could pose systemic risks to financial services. These concerns extend beyond efficiency and touch on financial stability itself. A 2024 Bain & Company survey found that regulatory uncertainty was the primary cause of slow adoption among financial services firms, at 43%, with concerns about quality and accuracy close behind at 42%.

A WilmerHale report recommends that banks treat generative AI like any other high-risk model: frame projects as pilots, maintain human oversight, monitor performance in real time, and keep regulators informed.

Organizational Readiness Gap

Even when accuracy issues are addressed, another barrier looms: readiness. Bain’s survey reveals that 63% of organizations either lack or are uncertain about having the proper data management practices for AI, thereby putting their projects at significant risk.

Unlike startups or retailers, banks cannot simply roll out an untested model and fix problems later. They are conservative by necessity, shaped by decades of regulation and risk culture. Many projects never make it past the proof-of-concept stage, not because the technology fails, but because the organization is not ready to support scaling.

Conservative Rollouts Show Promise

Still, banks are not standing still. They are experimenting with generative AI, but in carefully controlled ways:

- At Bank of America, approximately $4 billion of a $13 billion technology budget has been allocated toward AI investments. Much of this has gone into internal productivity tools like "Erica for Employees," which helps staff navigate policies and respond to customer inquiries more efficiently. The focus is on enabling employees rather than exposing customers directly to generative models.

- BNY Mellon has introduced dozens of digital AI "employees," complete with logins and reporting structures. These digital workers are not advising clients or making trades—they are validating payments, assisting with coding, and handling back-office tasks. The narrative is clear: AI is welcomed, but within guardrails. According to BNY Chief Information Officer Leigh-Ann Russell, "This is the next level. I'm sure in six months' time it will become very, very prevalent."

- Goldman Sachs has rolled out a firmwide AI assistant. But like BNY Mellon and Bank of America, Goldman started internally. Employees use the assistant for document summarization and data analysis. Customers won't see it until the firm is confident in its reliability.

These case studies show the difference in philosophy. Generative AI in banking isn't rejected, but its entry point is cautious, internal, and deliberate.

How AI in Banking Can Hit Its Stride

If AI in banking is to move beyond experiments and deliver real business value, the path forward requires a structured approach: mitigating risks, establishing governance, and focusing on areas of greatest potential.

1. Mitigate Accuracy Risks with RAG

Generative AI works by predicting the next word in a sequence. While this makes it flexible, it also means the model can produce confident but inaccurate responses. That's where Retrieval-Augmented Generation (RAG) comes in.

RAG can be understood as giving AI a reference library before it answers questions. In the context of banking, instead of relying on potentially outdated information from its training, the AI first searches through a bank's current, approved documents—such as the latest fee schedules, compliance procedures, or account policies—and then crafts its response using only that verified information. It's like having an employee who always checks the official manual before giving customers an answer, ensuring responses are both accurate and compliant with an institution's current rules.

For example, when a customer asks about international transfer fees, RAG ensures the response comes directly from the bank's current fee schedule, rather than from outdated training data that could lead the model to hallucinate incorrect rates. A white paper by Kodex AI (in partnership with Deutsche Bank) recommends RAG as a way to reduce errors and maintain control over outputs.
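The retrieve-then-answer flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `Document` type, the `APPROVED_DOCS` contents, and the keyword-overlap scoring are all hypothetical stand-ins (real systems typically use embedding-based search over a document store), and the call to a language model is deliberately left out.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str


# Hypothetical approved sources; the wording is placeholder text, not real bank policy.
APPROVED_DOCS = [
    Document("fee-schedule", "International transfer fee: a flat fee applies to each outgoing international transfer."),
    Document("account-policy", "Savings accounts require a minimum opening deposit."),
    Document("compliance-kyc", "Identity verification is required before opening any account."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, docs: list[Document], top_k: int = 1) -> list[Document]:
    """Rank documents by how many query words they share; drop documents with no overlap."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc.text)), doc) for doc in docs]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_grounded_prompt(query: str, docs: list[Document]) -> str | None:
    """Build a prompt restricted to retrieved sources, or return None if nothing matches."""
    retrieved = retrieve(query, docs)
    if not retrieved:
        return None  # no approved source found: hand off to a human instead of guessing
    context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in retrieved)
    return (
        "Answer using only the approved sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_grounded_prompt("What is the fee for an international transfer?", APPROVED_DOCS))
```

The design choice worth noting is the `None` return: when no approved document matches, the sketch declines to build a prompt at all, which mirrors the principle that the model should answer only from verified material.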

2. Implement Strong Model-Risk Governance

Banks already have frameworks for model-risk management in credit scoring and fraud detection. The same rigor must apply to generative AI.

Recommended steps include establishing accuracy thresholds (e.g. 95% minimum for customer-facing applications), launching projects as pilots with defined exit strategies and specific timeframes, keeping humans in the loop for sensitive decisions, monitoring outputs continuously for accuracy and compliance, and reporting transparently to regulators about methodology and safeguards. Risk management guidance from EY specifically recommends that oversight of AI/ML models should be consistent with traditional model processes, with clear roles and responsibilities for developers, users, and validators to achieve accountability for risks.

This is a prerequisite for trust. Clients and regulators alike need to know that AI in banking meets the same standards as any other critical system.
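Two of the steps above, an accuracy threshold and human review for sensitive decisions, can be expressed as a simple routing rule. The sketch below is illustrative only: `PilotMonitor`, `route_response`, and the routing labels are hypothetical names, and the 0.95 threshold is taken from the example figure mentioned earlier rather than from any regulatory requirement.

```python
from dataclasses import dataclass

# Example minimum accuracy for customer-facing applications (the 95% figure cited above).
ACCURACY_THRESHOLD = 0.95


@dataclass
class PilotMonitor:
    """Tracks how many reviewed model outputs were judged correct during a pilot."""

    threshold: float = ACCURACY_THRESHOLD
    correct: int = 0
    total: int = 0

    def record(self, was_correct: bool) -> None:
        """Log the outcome of one human-reviewed output."""
        self.total += 1
        if was_correct:
            self.correct += 1

    @property
    def accuracy(self) -> float:
        return self.correct / self.total if self.total else 0.0

    def meets_threshold(self) -> bool:
        """True only when there is review data and measured accuracy clears the threshold."""
        return self.total > 0 and self.accuracy >= self.threshold


def route_response(is_sensitive: bool, monitor: PilotMonitor) -> str:
    """Send sensitive requests, or any request while accuracy is unproven, to a human."""
    if is_sensitive or not monitor.meets_threshold():
        return "human_review"
    return "auto_send"


if __name__ == "__main__":
    monitor = PilotMonitor()
    for i in range(100):
        monitor.record(was_correct=(i >= 4))  # 96 of 100 reviewed outputs were correct
    print(monitor.accuracy, route_response(is_sensitive=False, monitor=monitor))
```

A monitor with no recorded reviews never meets the threshold, so a new deployment defaults to human review until evidence accumulates.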

3. Adopt a Pilot-to-Scale Playbook

Rather than chasing enterprise-wide deployments on day one, banks can benefit from a phased approach with clear timeframes. Industry best practices suggest that pilot phases typically span 3 to 6 months, with organizations focusing on use cases that can demonstrate tangible results within 2 to 3 months to build momentum and stakeholder support. The process begins with pilots in low-risk areas, such as internal knowledge retrieval, agent assistance, or document summarization. Next comes proof: tracking accuracy rates, employee productivity gains, and cost savings. Finally, banks can scale into customer-facing services once governance and reliability are demonstrated. Research also shows that financial institutions using centrally led AI operating models are most successful in moving use cases past the pilot stage, while more dispersed approaches struggle with scaling.

This playbook reduces exposure while still unlocking incremental value.

4. Focus on Customer Experience and Operations Success Stories

Customer experience and operations are the most promising areas for generative AI in banking: proven success stories demonstrate measurable ROI, and careful implementation delivers that value while building trust.

On the customer side, PayPal improved its real-time fraud detection by 10%. Many banks’ AI chatbots handle routine inquiries with 24/7 consistency, eliminating wait times for basic services.

Operations offer even greater ROI potential. JPMorgan's COiN platform processes 12,000 commercial credit agreements annually, saving 360,000 hours of human work. What once required extensive manual legal review now happens in seconds with near-zero error rates. A regional bank saw developer productivity jump 40% using GenAI, with over 80% of its developers reporting improved coding experience.

Institutions succeeding with AI focus on solving tangible problems—reducing errors, cutting processing time, and eliminating manual work—rather than implementing AI for its own sake.

What It Will Take

The Commonwealth Bank's failed voice bot rollout serves as a cautionary tale: it shows what happens when innovation outpaces governance and practical application. In the rush to modernize, CBA overlooked the need for accuracy, oversight, and a clear path to value.

Banks don't need to be first-movers to succeed with generative AI. They need to be thoughtful adopters. By grounding responses in trusted data through RAG, applying strong model-risk governance with clear metrics and timeframes, and scaling pilots carefully in customer experience and operations, financial institutions can realize genuine value while protecting trust.

Banks are careful adopters, and that’s a strong suit. With the right strategy, they can transform caution into a competitive advantage, building AI systems that are both innovative and trustworthy. The institutions that master this balance will lead the way into the future of financial services, proving that successfully adopting AI in banking stems from the qualities that banking has consistently been recognized for: transparency and trustworthiness.
