How to Fix AI Customer Service Agents Failing at the Handoff

Churn from just 2% of support ticket customers can result in six figures or more of lost revenue. Here’s how Bank of America, Oscar Health, and Verizon win with AI in customer service.

When AI customer service agents escalate to a human agent, the handoff often creates frustration.

Forcing customers to repeat themselves drives 54% to give up on getting help.

Gartner predicts AI agents will address 80% of customer service issues by 2029.

Every AI customer service deployment has a moment of truth.

Thomas has called three times this week. Same problem each time: his order still hasn't arrived.

On his third call, he reaches an AI customer service agent, spends four minutes explaining the situation, provides his order number, confirms his address, and describes two failed resolution attempts. The AI escalates to a human representative.

The agent picks up. "Hi, how can I help you today?"

Thomas isn't thinking about his order anymore. He's furious.

This isn't an edge case. 74% of consumers find it very frustrating to repeat themselves across interactions, according to Zendesk's 2026 CX research. And the business consequences of that frustration can be devastating: 54% give up when forced to repeat their issue multiple times, and 29% stop buying due to poor customer experience. Forcing a customer to start over signals that their time doesn't matter, that they're a ticket number.

In a typical B2B SaaS environment, deflecting 1,000 support tickets saves roughly $10,000 in operational costs. But if just 2% of those customers churn at an average annual contract value of $5,000, the revenue lost is $100,000. This happens all too frequently because support budgets are siloed from revenue retention metrics. Companies optimize for cost per ticket while destroying customer lifetime value.
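The arithmetic behind that comparison is simple enough to check in a few lines. This is a minimal sketch using the figures above. The function name is hypothetical, the $10 per-ticket saving is derived from $10,000 across 1,000 tickets, and it assumes each deflected ticket maps to one customer.

```python
def handoff_economics(tickets_deflected, savings_per_ticket,
                      churn_rate, annual_contract_value):
    """Compare deflection savings with revenue lost to churn.

    Assumes one customer per deflected ticket (an illustrative
    simplification, not a claim about any real support queue).
    """
    savings = tickets_deflected * savings_per_ticket
    churned_customers = tickets_deflected * churn_rate
    revenue_lost = churned_customers * annual_contract_value
    return savings, revenue_lost, savings - revenue_lost


savings, revenue_lost, net = handoff_economics(
    tickets_deflected=1_000,
    savings_per_ticket=10.0,      # $10,000 / 1,000 tickets
    churn_rate=0.02,              # 2% of those customers churn
    annual_contract_value=5_000.0,
)
print(f"Deflection savings: ${savings:,.0f}")
print(f"Revenue lost to churn: ${revenue_lost:,.0f}")
print(f"Net effect: {net:,.0f}")
```

With these inputs the savings come to $10,000 and the lost revenue to $100,000, so the net effect is negative by a factor of ten. Changing the churn rate shows how quickly a "successful" deflection program can turn into a loss.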

Enterprises deploying AI customer service chatbots often track the wrong metrics and lack the architecture a clean handoff requires. Here, we’ll show how Bank of America, Oscar Health, and Verizon built context transfer, sentiment-triggered routing, and escalation feedback loops in from the start.

Why Do AI Customer Service Agents Fail at the Handoff?

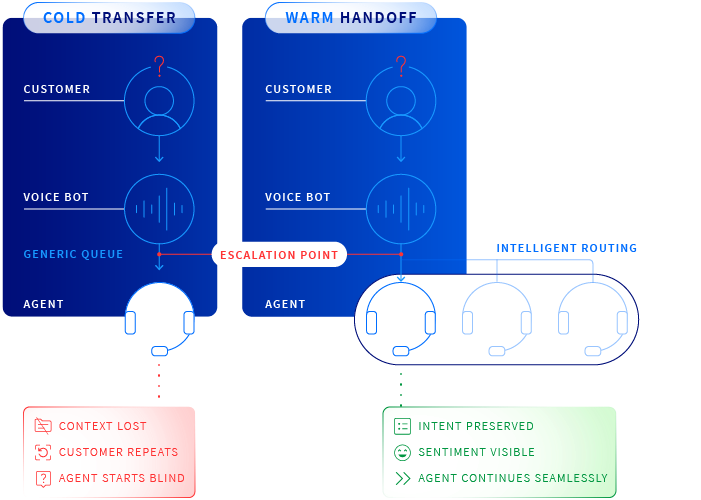

The conversation about AI in contact centers fixates on automation. What percentage of inquiries can the bot handle? How much faster can it resolve routine questions? How many agent hours does it save? These metrics matter, but they don't capture what happens when AI hands a customer off to a human agent. Contact center experts Bucher + Suter explain it as a warm transfer versus a cold handoff.

When enterprises miss the escalation layer, the consequences become public. Klarna's AI assistant handled the equivalent work of 800 full-time employees and cut resolution time from 11 minutes to 2. What followed in 2025 was quieter but more instructive: the company had over-weighted cost savings at the expense of quality. Klarna's fix centered on escalation, adding AI-generated handoff summaries so agents received full context, and introducing confidence scoring so the system escalated rather than guessed when it wasn't certain. The AI ended up handling more interactions than before, not fewer, because the handoff was finally trustworthy.

Three failure modes show up repeatedly.

- Amnesia. An agent picks up with no record of the conversation. One in three agents lacks the customer context needed to deliver an ideal experience.

- Lack of Sentiment-Aware Routing. Not every escalation is the same. When customer service AI agents escalate without detecting or communicating emotional state, human agents inherit a problem they can't see coming.

- The Feedback Void. Most enterprises track containment or deflection, but few track what happens to escalated conversations: whether the agent resolved the issue, how long it took, whether the customer repeated themselves. Without that loop, the AI learns nothing from its failures, and the escalation layer stays broken.

"Behind the scenes, every handoff should be tagged and tracked, feeding an algorithm that learns when and why customers escalate."

—Julie Geller, Info-Tech Research Group.

How Does Bank of America Ensure Full Context Transfer?

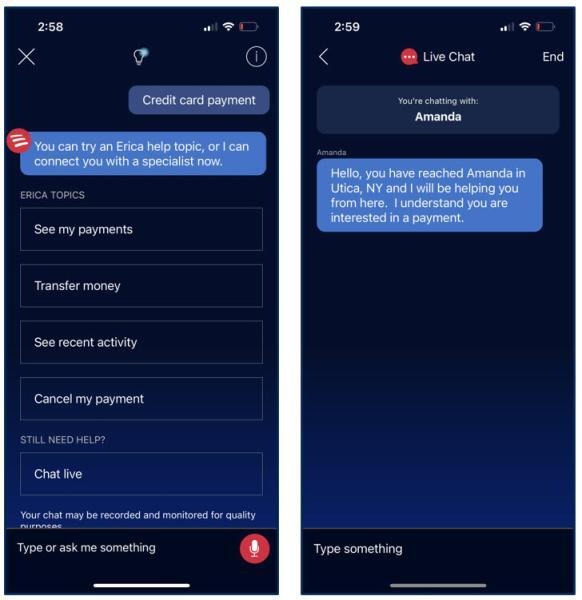

Bank of America's AI customer service agent, Erica, has handled more than 3 billion customer interactions since launch, processing approximately 58 million interactions per month as of August 2025. The architecture behind the handoff gets the credit.

Erica operates against a library of roughly 700 topics, using supervised machine learning rather than generative AI. The constraint is deliberate. It gives Bank of America precise control over what the AI customer service agent attempts to resolve and, more importantly, when it stops trying. When Erica escalates, it transfers the full interaction context to the receiving agent.
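To make "full interaction context" concrete, here is a rough sketch of what a handoff payload could carry. It is not Bank of America's schema. The `HandoffContext` class, its fields, and the `agent_briefing` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffContext:
    """Hypothetical payload passed from the AI agent to a human agent."""
    customer_id: str
    issue_summary: str
    prior_contacts: int = 0
    verified_details: dict[str, str] = field(default_factory=dict)
    attempted_resolutions: list[str] = field(default_factory=list)


def agent_briefing(ctx: HandoffContext) -> str:
    """Render the context as a short briefing the agent reads before greeting."""
    lines = [
        f"Customer {ctx.customer_id}: {ctx.issue_summary}",
        f"Prior contacts on this issue: {ctx.prior_contacts}",
    ]
    for key, value in ctx.verified_details.items():
        lines.append(f"Already verified {key}: {value}")
    for attempt in ctx.attempted_resolutions:
        lines.append(f"Already tried: {attempt}")
    return "\n".join(lines)


ctx = HandoffContext(
    customer_id="C-1001",                      # illustrative ID
    issue_summary="Order has not arrived",
    prior_contacts=3,
    verified_details={"order number": "A-42", "address": "confirmed"},
    attempted_resolutions=["reshipment", "carrier trace"],
)
print(agent_briefing(ctx))
```

The point of the design is that nothing in the briefing requires the customer to speak first. An agent who reads it can open with the problem instead of "How can I help you today?"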

"Erica understands that that's an emotionally important conversation. So the best course of action is to create a connection right back to an agent."

—Jorge Camargo, Head of Digital Platforms, Bank of America.

The system also detects emotional signals. Conversations involving financial distress, bereavement, or signs of vulnerability trigger specific routing protocols. The agent who receives that transfer doesn’t just know what the customer needs, but what state they're in.
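A simplified sketch of sentiment-triggered routing might look like the following. It stands in simple phrase matching for whatever classifier a production system would use, and the signal names, phrases, and queue names are all assumptions made for illustration, not details of Erica.

```python
# Hypothetical signals and trigger phrases. A real system would likely
# use a trained classifier rather than substring matching.
SENSITIVE_SIGNALS = {
    "bereavement": ("passed away", "death certificate", "funeral"),
    "financial_distress": ("can't pay", "overdraft", "foreclosure"),
}


def route(message: str) -> dict:
    """Pick a queue and attach the detected signal so the agent sees it."""
    text = message.lower()
    for signal, phrases in SENSITIVE_SIGNALS.items():
        if any(phrase in text for phrase in phrases):
            return {"queue": "specialist_care", "signal": signal}
    return {"queue": "general_support", "signal": None}


print(route("My husband passed away and I need to close his account"))
print(route("What is my routing number?"))
```

The detected signal travels with the transfer rather than only steering it, which is what lets the receiving agent adjust their opening before the customer says a word.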

That changes everything about how the conversation begins.

The governance principle here is architectural. Erica's escalation quality is a direct product of the constraints on what it handles autonomously. The goal is appropriate escalation, with full context, every time.

How Do Oscar Health’s Hard Stops Protect Patients?

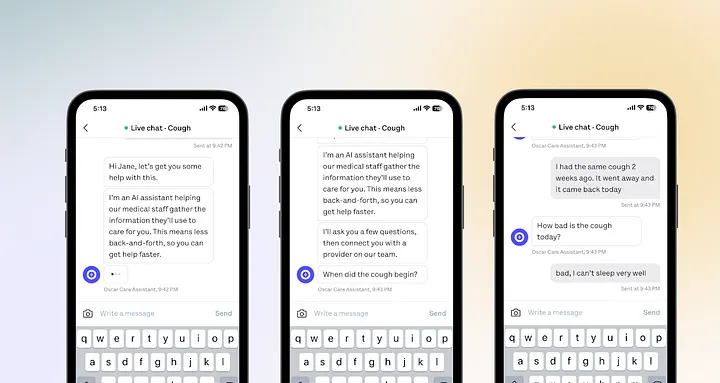

Oscar Health operates in an environment where a poorly constructed AI response carries serious consequences.

Oscar's Superagent is an orchestration of language models and internal APIs, built to answer complex health insurance questions in real time. It layers intent classification, targeted information retrieval, and calls to Oscar's live operational systems to synthesize answers with supporting citations. The architecture has a specific safeguard built in: each sub-agent removes itself from the flow if it isn't confident in its own response, ensuring only high-confidence data reaches the member or human agent.

That constraint is the escalation design. When the system isn't certain, it stops and routes to a human, rather than guessing and getting it wrong.
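The pattern can be sketched in a few lines: each sub-agent either returns a draft with a confidence score or abstains, and the orchestrator escalates when nothing clears the bar. This is an illustration of the idea, not Oscar's implementation. The `Draft` type, the 0.8 floor, and the two toy sub-agents are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    source: str
    text: str
    confidence: float  # 0.0 to 1.0, self-reported by the sub-agent


SubAgent = Callable[[str], Optional[Draft]]
CONFIDENCE_FLOOR = 0.8  # assumed threshold, tuned per deployment


def answer_or_escalate(question: str, sub_agents: list[SubAgent]) -> dict:
    """Keep only confident drafts; hand off to a human if none remain."""
    drafts = [agent(question) for agent in sub_agents]
    confident = [d for d in drafts
                 if d is not None and d.confidence >= CONFIDENCE_FLOOR]
    if not confident:
        return {"action": "escalate_to_human", "drafts": []}
    return {"action": "answer", "drafts": confident}


def benefits_agent(question: str) -> Optional[Draft]:
    # Toy sub-agent: abstains (returns None) outside its topic.
    if "deductible" in question.lower():
        return Draft("benefits", "Plan documents list the deductible.", 0.93)
    return None


def claims_agent(question: str) -> Optional[Draft]:
    # Toy sub-agent: always answers, but with low confidence.
    return Draft("claims", "Possibly related to a pending claim.", 0.41)


agents = [benefits_agent, claims_agent]
print(answer_or_escalate("What is my deductible?", agents)["action"])
print(answer_or_escalate("Why was I billed twice?", agents)["action"])
```

The low-confidence claims draft never reaches the member in either case. In the second case no draft survives, so the question goes to a human instead of being answered with a guess.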

The results are measurable. Oscar's claims assistant reduced the time to resolve escalations by 50%, with accuracy on par with or better than human agents, and the company expects to automate investigations for at least 4,000 tickets per month. Human agents using Superagent reported an 82.6% satisfaction rate. The performance gains come directly from knowing precisely where the AI's boundaries are and engineering the handoff to happen cleanly at those boundaries every time.

Do Verizon’s AI Feedback Loops Make Its System Smarter?

Verizon treats every transfer as a data point.

The system tags and tracks every handoff, feeding outcomes back into the learning algorithm. Issues that consistently require escalation get flagged for retraining. Customer signals that reliably predict the need for a human customer service representative become a routing trigger. The system gets better with each interaction because of what it learns from the edges of its capabilities.
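As a rough illustration of that loop, the sketch below tallies escalations by topic and flags the topics that escalate too often. The record format, the thresholds, and the function name are assumptions for the example, not details of Verizon's system.

```python
from collections import Counter


def topics_to_retrain(conversations, min_volume=50, max_escalation_rate=0.30):
    """Flag topics whose escalation rate exceeds the allowed rate.

    `conversations` is an iterable of (topic, was_escalated) pairs.
    Topics with fewer than `min_volume` conversations are skipped
    so a handful of escalations does not trigger retraining.
    """
    totals, escalated = Counter(), Counter()
    for topic, was_escalated in conversations:
        totals[topic] += 1
        if was_escalated:
            escalated[topic] += 1
    return sorted(
        topic for topic, count in totals.items()
        if count >= min_volume and escalated[topic] / count > max_escalation_rate
    )


# Illustrative data: billing disputes escalate often, password resets rarely.
log = (
    [("billing_dispute", True)] * 40 + [("billing_dispute", False)] * 60
    + [("password_reset", True)] * 5 + [("password_reset", False)] * 95
)
print(topics_to_retrain(log))
```

The same tally could feed routing as well as retraining: a topic that escalates nearly every time is a candidate for going straight to a human.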

"AI is most effective when human agents can use it as a 'sixth sense' or as an 'angel on the shoulder.'"

—Daniel Lawson, Verizon Business.

The goal, as the company describes it, is a "sixth sense" model in which human agents arrive at an escalation already armed with context, sentiment data, and predicted next steps. The agent doesn't ask the customer to catch them up. They already know.

The result is a system that reduces the conditions that create bad escalations in the first place.

What Is the Future of AI in Customer Service?

AI failure statistics paint a pessimistic picture (Gartner predicts more than 40% of agentic AI projects may be cancelled by the end of 2027, as one example), but success stories across industries point to infrastructure as the differentiating factor. In customer service, context loss is a failure of the escalation infrastructure.

Gartner also predicts agentic AI will autonomously resolve 80% of common customer service issues without human intervention by 2029, driving 30% reductions in operational costs. That future is real, but only if customer trust is won at the AI-to-human handoff stage.
