A Smarter Enterprise AI Strategy Starts With Knowing When to Stop

A smarter enterprise AI strategy means knowing when to stop. Explore a decision framework for pivoting or exiting failing AI initiatives before sunk costs and stalled pilots drain resources.

A disciplined enterprise AI strategy requires clear stop signals, especially when 42% of enterprises terminate most AI projects and nearly 80% fail to deliver measurable ROI.

AI initiatives linger for four reasons: sunk costs; overestimated readiness (94% of enterprises express confidence, but only 22% demonstrate accuracy); weak integration (just 1% reach maturity); and low adoption.

Leaders need a structured decision framework with funding thresholds and governance checkpoints to decide when to scale, pivot, or terminate before resources are drained.

For years, Dojo was supposed to be Tesla’s secret weapon. Inside Tesla, it wasn’t just another infrastructure project; it was viewed as the foundation of an AI empire. A custom-built supercomputer powered by Tesla-designed chips, Dojo was engineered to train the neural networks behind autonomous driving and robotics at an unprecedented scale.

At one point, Morgan Stanley analysts projected it could add $500 billion to Tesla’s valuation. And then, it was shut down.

In August 2025, CEO Elon Musk made a decisive call: disband the Dojo team, reassign its engineers, and pivot Tesla’s AI strategy away from large-scale internal training infrastructure. What had once been positioned as Tesla’s defining AI advantage was no longer the priority.

The move marked a rare moment of strategic recalibration for a company known for doubling down on audacious bets. Dojo had symbolized Tesla’s ambition to control the full AI stack, from silicon to software, and to build a structural advantage competitors could not easily replicate. It was a long shot with asymmetric upside: if successful, Tesla wouldn’t just build better cars; it would own the infrastructure powering autonomy and robotics.

But enterprise AI at this scale is unforgiving. Custom chip development compounds technical risk. Massive infrastructure investments magnify capital exposure. Talent concentration increases execution fragility. As complexity mounted and the gap between investment and measurable business impact widened, the original vision began colliding with operational reality.

Shutting down Dojo wasn’t simply a cost decision. It was a strategic reset: a signal that even the boldest AI ambitions must ultimately answer to execution discipline and return on capital.

And this pattern extends far beyond Tesla. Across industries, 42% of enterprises terminate most of their AI projects as they struggle to move beyond pilots and deliver production-ready systems.

Yet most enterprise AI initiatives lack a structured way to make those pivot-or-exit decisions. Without objective benchmarks, leaders struggle to assess progress, recognize early warning signs, and exit before costs escalate.

What does enterprise transformation mean in this context? It is not simply scaling new technology. It is the disciplined ability to align AI investments with business outcomes and to recalibrate when they no longer deliver value. Without that clarity, ambition easily outpaces execution.

“A tolerance for failure creates space for better judgment, because the hardest decision is knowing when a project no longer serves the business.”

— Udit Pahwa, Chief Information Officer at Blue Star Limited

In this article, we’ll see how a structured decision framework can help leaders identify and stop failing AI product strategies before sunk costs and delays drain critical resources.

The Cost of AI Product Strategies That Refuse to Die

AI projects rarely fail all at once; they fade slowly. Teams stay committed, budgets keep flowing, and leaders wait for a breakthrough that never fully arrives. Without clearly defined KPIs or success metrics, AI projects linger far beyond their useful life, driven more by hope than evidence.

This hesitation is understandable. An enterprise AI strategy can be complex, expensive, and uncertain. Pulling the plug too early risks missing long-term gains. But waiting too long often locks organizations into cycles of rising cost and diminishing returns. That tension explains why many enterprises struggle to make timely decisions.

“The challenge is people keep going down the path of not pulling the plug, because they always hope that there’s a next breakthrough right around the corner.”

— Andreas Welsch, AI Consultant and former Vice President and Head of Marketing for AI at SAP

Shorter, time-bound pilots with regular evaluations help break this pattern. By introducing fixed checkpoints, leaders can assess whether projects are truly moving toward business goals or simply accumulating technical debt. Without these guardrails, early optimism turns into prolonged uncertainty.

That’s why so many AI product strategies stall after showing early promise. For instance, Gartner estimates that 50% of generative AI projects will be abandoned after proof of concept, often due to poor data quality, escalating costs, weak governance, or unclear business value. Each abandoned project represents not just lost investment, but months of misdirected effort.

When a failing enterprise AI strategy drags on, the damage compounds. Organizations lose capital that could fuel higher-impact initiatives. Talent gets tied up in low-return work. Momentum slows, and confidence in future AI investments erodes. Over time, this erosion becomes a structural barrier to enterprise AI transformation.

The Four Traps That Can Keep A Failing Enterprise AI Strategy Alive

Enterprise AI transformations rarely fail because they lack AI initiatives. They fail because the systems, incentives, and assumptions designed to protect ongoing AI projects often prevent timely course corrections.

Without clear business goals, measurable ROI, and structured checkpoints, an enterprise AI strategy can linger long after its potential has been exhausted, consuming money, talent, and time.

Here are four common traps that keep an AI product strategy alive even when it no longer delivers meaningful value:

1. The Sunk Cost Trap: Many AI initiatives roll out without a clear business case or ROI metrics, leading to overspending and projects that are difficult to shut down once large investments have been made. For example, Capgemini halted a client’s GenAI-powered chatbot project after projected annual costs reached $25 million, largely due to data consumption, without delivering a corresponding business return. This shows that not all AI implementations deliver a positive ROI.

2. The Data Readiness Illusion: Many AI initiatives fail not because of the technology, but because organizations and leaders overestimate their AI preparedness. Data readiness is often assumed rather than tested. Having large amounts of data creates confidence, but volume alone does not mean the data is clean, representative, or suitable for the intended use case. IBM’s Watson for Oncology is a good example. The system was designed to help doctors recommend cancer treatments, but it was trained largely on data from a single institution. When introduced into different hospitals and clinical environments, it struggled to account for variations in practice and patient populations. The issue was not the project's ambition, but the limitations of the data supporting it. Without carefully assessing whether enterprise data is complete, diverse, and reliable, AI initiatives can continue to consume resources while the underlying problem remains unresolved.

3. The Integration Complexity Trap: Many AI projects stall because embedding new technology into workflows, systems, and decision-making processes is far harder than anticipated. Often, a model that performs well in a controlled pilot must eventually connect to legacy systems, interact with live data pipelines, satisfy security and compliance requirements, and fit within existing workflows. Each layer introduces new dependencies and stakeholders, from IT and legal to operations and frontline teams. What begins as a promising proof of concept can quickly become a multi-department coordination effort, where delays compound and redesigns become a reality. That’s why, although 92% of companies plan to increase AI spending, only 1% report achieving mature deployments where AI is fully integrated and delivering measurable business impact. Without anticipating this integration burden, initiatives often remain stuck between pilot success and operational scale.

4. The Adoption & Behavior Trap: Employee trust and engagement play a crucial role in the success of any AI product strategy. Despite investments in technology, many organizations struggle to get teams to consistently use AI in real workflows. The gap is clear: 57% of business units in high-maturity organizations trust and are ready to use AI, compared with just 14% in low-maturity organizations, underscoring that adoption and behavioral alignment are key to realizing business value.

Together, these traps can keep an enterprise AI strategy stuck, preventing organizations from realizing real business impact unless they actively address them.

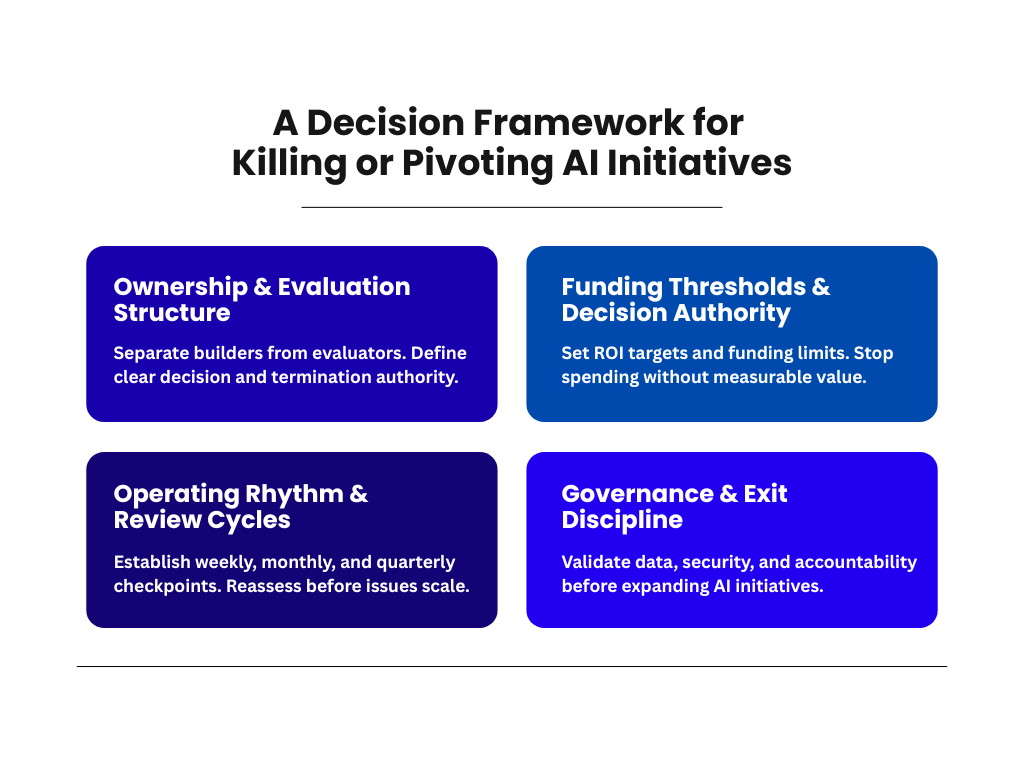

A Decision Framework for Killing or Pivoting AI Initiatives

The four traps above reveal the same underlying problem: most organizations lack a clear mechanism for deciding when an AI initiative should continue, pivot, or stop. Sunk costs blur judgment. Data gaps go unchallenged. Integration delays are tolerated. Weak adoption gets reframed as “early stage.” Without structured checkpoints, momentum replaces evidence.

A decision framework can correct this. It introduces clear ownership, defined funding limits, regular review cycles, and governance guardrails that force objective evaluation. Instead of waiting for a project to visibly fail, leaders assess progress against predefined milestones and measurable business outcomes. If those thresholds are not met, action is required.
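To make the checkpoint logic concrete, here is a minimal sketch in Python of a scale, pivot, or stop review. Everything in it is an illustrative assumption rather than a prescribed method: the `Checkpoint` fields, the `pivot_floor` of 50%, and the rule that exceeding the funding limit forces a stop are hypothetical choices an organization would set for itself.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    PIVOT = "pivot"
    STOP = "stop"


@dataclass
class Checkpoint:
    """One review of an initiative against thresholds agreed in advance (hypothetical fields)."""
    milestones_met: int        # milestones delivered by this review
    milestones_planned: int    # milestones due by this review
    spend: float               # cumulative spend to date
    funding_limit: float       # approved ceiling for the current stage
    outcome_actual: float      # measured business outcome, e.g. cost saved
    outcome_target: float      # outcome promised for this review


def review(cp: Checkpoint, pivot_floor: float = 0.5) -> Decision:
    """Return a decision based on predefined thresholds, not on momentum."""
    if cp.milestones_planned <= 0 or cp.outcome_target <= 0:
        raise ValueError("planned milestones and outcome target must be positive")

    milestone_ratio = cp.milestones_met / cp.milestones_planned
    outcome_ratio = cp.outcome_actual / cp.outcome_target

    # Assumption: breaching the funding limit stops the project until re-approved.
    if cp.spend > cp.funding_limit:
        return Decision.STOP
    # Both delivery and business outcome are on target: continue and scale.
    if milestone_ratio >= 1.0 and outcome_ratio >= 1.0:
        return Decision.SCALE
    # Partial progress above the floor: change course rather than continue as-is.
    if min(milestone_ratio, outcome_ratio) >= pivot_floor:
        return Decision.PIVOT
    return Decision.STOP


on_track = Checkpoint(4, 4, 800_000, 1_000_000, 1_200_000, 1_000_000)
print(review(on_track).value)  # scale
```

The point of the sketch is that the thresholds are fixed before the review happens, so the outcome does not depend on how invested the team has become.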

Next, let’s take a closer look at the four pillars that outline how to build that discipline into AI initiatives before optimism and investment make course correction unnecessarily difficult.

1. Ownership and Evaluation Structure

AI initiatives are more likely to persist when the same teams responsible for building a system are also responsible for judging its progress. Emotional investment, internal momentum, and sunk costs can cloud objective evaluation. To counter this, organizations can separate builders from evaluators and define clear decision and termination authority at the executive level.

Apple’s cancellation of its decade-long electric vehicle project, known internally as Project Titan, illustrates why this structure matters. Launched around 2014, the initiative grew to nearly 2,000 employees and consumed billions of dollars over ten years.

Over time, the project faced repeated leadership changes and shifting technical ambitions. Internal discussions reportedly placed the vehicle’s projected price near $100,000, raising concerns that profit margins would fall well below Apple’s historical standards. At the same time, the company was spending hundreds of millions of dollars annually on a product that might never reach the market.

Ultimately, senior executives made the decision to wind down the program and reassign many team members to generative AI initiatives. The key point is structural: the termination authority did not sit within the project itself. When builders are not the sole evaluators, and when executive leaders have clear authority to stop funding, organizations can redirect capital and talent before long-term ambition becomes structural drag.

2. Funding Thresholds and Decision Authority

In many organizations, AI initiatives move forward without clearly defined financial guardrails or formal investment checkpoints. Budgets expand incrementally, often because work is already underway rather than because measurable results justify continued spending.

Over time, momentum begins to outweigh business logic, and projects persist to defend earlier commitments instead of delivering demonstrable value. To prevent this, organizations can set ROI targets and funding limits, and stop spending when measurable value fails to materialize.

IBM’s Watson Health shows the consequences of allowing investment to continue without enforced thresholds. Beginning in the early 2010s, IBM made healthcare AI a strategic priority, acquiring multiple health data companies and positioning Watson as a transformative solution for cancer treatment and clinical decision support.

The initiative was framed internally as a bridge to IBM’s future in artificial intelligence. Yet as adoption challenges and performance concerns emerged, funding continued, and the program expanded rather than contracted.

“They spent way more money building this than they got back. Just the acquisitions alone cost them $5 billion. That it was sold so many years later, after so much effort - 7,000 employees at one point - means that this was a total failure that they needed to just cut their losses and move on.”

— Casey Ross, Chief Investigative Reporter for Data and Technology at STAT

Watson Health demonstrates why AI investments need to have explicit ROI targets and enforced funding limits. Without disciplined financial checkpoints, organizations can spend years sustaining initiatives that no longer justify continued investment.
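As a rough illustration of what an enforced financial checkpoint could look like, the sketch below computes simple ROI as (benefit - cost) / cost and releases the next funding tranche only if spend is within the approved limit and ROI meets the target. The function names, the tranche concept, and all figures are hypothetical and are not drawn from the Watson Health case.

```python
def roi(benefit: float, cost: float) -> float:
    """Simple return on investment: net gain divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


def release_next_tranche(
    benefit: float,
    spend: float,
    funding_limit: float,
    roi_target: float,
) -> bool:
    """Approve further funding only when both guardrails hold (assumed policy)."""
    within_limit = spend <= funding_limit
    meets_target = roi(benefit, spend) >= roi_target
    return within_limit and meets_target


# Hypothetical figures: 1.5M measured benefit on 1.0M spend, 2.0M limit, 25% target.
print(roi(1_500_000, 1_000_000))                                     # 0.5
print(release_next_tranche(1_500_000, 1_000_000, 2_000_000, 0.25))   # True
```

A real program would likely use a richer measure than single-period ROI, but even a simple gate like this makes continued spending an explicit decision rather than a default.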

3. Operating Rhythm and Review Cycles

Without a disciplined operating rhythm, even well-funded AI initiatives can drift into structural risk. Growth feels like progress. Headcount expands, roadmaps widen, and new features move into development, yet the underlying assumptions about integration, authority, and feasibility remain untested. Problems surface only after scale makes them expensive.

Volkswagen’s software unit, Cariad, reflects this dynamic. Launched in 2020 to centralize software development across the Volkswagen Group, it was tasked with building a unified vehicle software architecture while advancing next-generation driver assistance and software-defined vehicle capabilities. The ambition was to consolidate systems across Audi, Porsche, and Volkswagen under one integrated platform.

Instead of sequencing the transformation, Cariad expanded rapidly while inheriting troubled legacy platforms involving nearly 200 suppliers. Within months, the organization grew to roughly 6,000 employees. Teams were expected to stabilize existing architectures and design future systems simultaneously. Brand-specific structures persisted inside the new unit, and authority over budgets and priorities remained fragmented. Scope widened before integration stabilized.

As delays emerged, oversight intensified. Task forces multiplied. Reporting layers expanded. Insiders described weeks filled with status meetings while underlying architectural dependencies remained unresolved. Model launches were postponed as software readiness slipped. By 2025, Volkswagen had invested approximately €14 billion in the effort and initiated significant restructuring, including thousands of job reductions.

The issue wasn't just the vision. It was expansion without recurring, structured reassessment of sequencing, cross-brand dependencies, and decision rights. Activity increased, but no disciplined review cycle narrowed risk early enough.

Status updates can create the appearance of motion. A disciplined operating rhythm forces leaders to test assumptions, re-evaluate scope, and reset priorities before complexity compounds. Weekly, monthly, and quarterly checkpoints create space to reassess viability while change is still manageable. Without that cadence, large AI and software programs accumulate hidden structural risk until correction becomes costly and disruptive rather than incremental.

4. Governance and Exit Discipline

Scaling AI faster than governance can introduce hidden risks. Data quality, ownership, security, and accountability are foundational requirements, not afterthoughts. When those elements lag behind technical deployment, organizations risk compounding operational and compliance exposure. In such cases, acceleration may amplify fragility rather than value.

Before expanding AI initiatives, leadership can validate data, security, and accountability. These aren’t downstream cleanup tasks. They are prerequisites for responsible scale.

For example, Salesforce executives have publicly acknowledged governance breakdown as a common reason enterprise AI initiatives struggle. Organizations frequently attempt to expand AI capabilities while operating with fragmented data environments, unclear ownership structures, and uneven security controls. The result is not simply technical debt, but systemic risk that grows as deployment scales.

“We're seeing a lot of these AI projects really failing, and a lot of it's because customers still have fragmented data, they still have weak governance, they still have poor security.”

— Desiree Motamedi, Senior Vice President and Chief Marketing Officer, Salesforce.

In response, Salesforce introduced what it describes as an AI trust layer, intended to centralize governance controls, embed security safeguards, and formalize accountability before broader rollout.

The broader lesson is straightforward. Governance isn’t optional. It is a gate. Before expanding any AI initiative, leadership must ask: Are we structurally ready to support this at scale? If the answer is no, the most strategic move may be to pause and strengthen the foundation before moving forward.

Enterprise Case Studies on Scaling, Pivoting, or Exiting AI

Kill-or-pivot AI decisions aren’t theoretical. They unfold inside real organizations, often under intense pressure, where leaders must decide whether to double down, change course, or walk away. The following case studies show how these moments play out across different industries:

- Amazon: In 2018, Amazon Web Services launched an AI research lab in Shanghai to advance enterprise artificial intelligence and strengthen its cloud presence in China. The lab focused on applied machine learning research as part of AWS’s broader global expansion strategy. Over time, however, rising operational complexity, escalating geopolitical tensions between the United States and China, and increased internal pressure to demonstrate long-term return on investment complicated the initiative. As generative AI began reshaping enterprise demand and AWS undertook broader cost controls and restructuring, leadership reassessed where AI spending would generate the greatest strategic value. In 2025, Amazon shut down the Shanghai AI lab, reassigning or dissolving teams and consolidating development around core platforms, data centers, and compute priorities. An AWS spokesperson described the move as a necessary step to optimize resources while continuing to invest strategically, reflecting CEO Andy Jassy’s emphasis on efficiency, disciplined capital allocation, and tighter alignment between AI development and measurable enterprise returns.

- Ocado Group: Ocado Group is a UK-based online grocery retailer and technology company that built its growth strategy around proprietary AI-driven warehouse automation. Known for developing advanced robotic systems for picking, packing, and inventory management, Ocado positioned its 2018 partnership with Kroger as a cornerstone of its expansion into the US market. Automated fulfillment centers were intended to demonstrate the scalability of its capital-intensive retail technology platform and validate its global ambitions. However, the rollout fell short of financial expectations. Several Kroger-linked warehouse sites were closed, investor pressure intensified, and Ocado’s shares declined sharply in 2025 amid concerns about whether the technology’s costs justified its returns. Rather than exiting automation, Ocado allowed exclusivity terms in its US and other supermarket agreements to lapse, enabling it to offer its warehouse platform to a broader range of retailers worldwide. The decision marked a strategic shift toward diversification, reduced partnership constraints, and stronger capital discipline.

Enterprise AI Strategy: Scale with Discipline, Exit with Confidence

Tesla’s decision to shut down Dojo underscores a central lesson in enterprise AI. What began as a defining in-house supercomputer, positioned as a long-term strategic pillar, ultimately had to be measured against business value, governance fit, and execution reality. By stepping back from a capital-heavy, high-complexity program, Tesla redirected focus toward AI efforts with clearer alignment and faster operational impact.

Simply put, enterprise AI transformation now depends on execution discipline. Nearly 80% of AI projects fail to deliver measurable ROI, highlighting how systemic the risk has become. Enterprise AI ambition is accelerating, but progress depends on structured decision frameworks that help leaders scale what works, pivot what stalls, and decisively exit what no longer serves the business.

Frequently Asked Questions

What is the role of AI in enterprise transformation?

AI plays a central role in enterprise transformation by improving performance across business functions. It enables automation of administrative tasks, supports hyperpersonalized customer experiences, and modernizes IT processes through capabilities such as automated code generation.

What is an AI strategy for the enterprise?

An AI strategy helps organizations intentionally harness AI capabilities and align initiatives with business objectives. It acts as a guiding compass, ensuring AI efforts contribute meaningfully to the company’s overall success.

What are the 4 pillars of AI strategy?

The four pillars of an AI strategy are Operating Model, Location Strategy, Organization Structure, and KPIs. Together, they form the foundation that makes AI initiatives scalable, sustainable, and impactful, enabling enterprises to realize measurable business value.

What is an enterprise AI strategy?

An enterprise AI strategy is a plan that defines how a company will use AI technologies like machine learning, NLP, and generative AI to meet its business goals. It aligns AI initiatives with objectives to deliver measurable impact and helps the organization gain a competitive edge.
