Inside How General Mills, HPE, and Mastercard Built Their AI Business Case

Why do most AI projects fail at the CFO’s desk? The business case for AI is often built the wrong way. See how enterprises design cases that secure approval.

Only 12% of CEOs report both cost and revenue benefits from AI despite widespread enterprise adoption.

AI proposals get approved when the cost of inaction is quantified and tied to a measurable financial impact.

General Mills, HPE, and Mastercard structured AI investments around cost, revenue, and risk before selecting models.

At a leadership event in San Francisco, Sasan Goodarzi, CEO of Intuit, interrupted the usual narrative around artificial intelligence with a blunt observation.

"Customers don't care about AI. Everybody talks about AI, but the reality is a consumer is looking to increase their cash flow… A business is trying to get more customers. They're trying to manage their customers, sell them more services."

— Sasan Goodarzi, CEO, Intuit

The comment stood in contrast to the conversation around him, one dominated by models, platforms, and competitive positioning.

Intuit, the company behind TurboTax, Credit Karma, and QuickBooks, serves millions of consumers and small businesses. And those users aren’t thinking about AI. They are thinking about cash flow, customers, and getting paid.

Simply put, AI isn’t the story. Measurable business outcomes are, and the business case for AI should be built on them.

This mindset has shaped how Intuit approaches AI investment across its platform. Instead of treating AI as a technology initiative, the company has consistently tied it to measurable operational and financial results that matter to customers and leadership, a pattern with broader implications for how AI fits into business strategy.

By fiscal year 2025, that discipline was visible in the numbers. CFO Sandeep Aujla reported that AI investments were on track to deliver nearly $90 million in annualized efficiencies, slightly ahead of projections. The figure was presented as a financial outcome, not a technology milestone.

The operational impact was equally clear. For instance, Intuit Assist helped reduce TurboTax support contact rates by 20%. More than 200 partnerships helped automate 90% of the data entered into a tax filing, up from 68% the previous year. AI agents were also saving customers an average of 12 hours each month, helping them get paid five days sooner and increasing the likelihood of full payment by 10%.

As Chief AI Officer Ashok Srivastava explained, these numbers matter because they translate directly into business time and productivity for customers.

"As a person who's run small businesses in the past, I can tell you numbers like that are very meaningful. Twelve more hours means 12 more hours that I can spend building my products, understanding my customers."

— Ashok Srivastava, Chief AI Officer, Intuit

Intuit had access to the same models, the same uncertainty, and the same pressure from boards and investors as every other enterprise. What it did differently was structural: finance was embedded early, outcomes were defined upfront, and the business case for AI came before the model.

This leads to a broader question many enterprises are still struggling to answer: what does an AI business case that actually wins CFO approval look like?

In this article, we break down how leading enterprises build AI business cases that get approved, why most fail before the first slide, and what it takes to move AI from pilot to production at scale.

Why CFO Scrutiny Over the Business Case for AI Has Sharpened in 2026

Investment in generative AI (Gen AI) for business is accelerating across large enterprises. For example, generative AI budgets at large organizations are projected to more than double from last year, reaching $7.45 million. Boards are no longer asking whether companies are investing in AI; they are asking what measurable financial value those investments deliver.

The challenge is that generative AI behaves very differently from traditional enterprise software. Seat-based SaaS licenses come with predictable costs and clear value projections before deployment. Gen AI runs on consumption-based pricing, fluctuating usage, and indirect productivity gains that are harder to measure. CFOs are being asked to approve budgets for a technology that behaves nothing like the software spend they have always known how to evaluate.

This financial unpredictability grows as AI moves from pilot to scale. By 2030, 80% of organizations are expected to shift from large software engineering teams to smaller, AI-augmented ones, bringing new infrastructure, talent, and workflow costs that most AI budgets have never accounted for.

Scaling generative AI across knowledge workers reveals another issue: costs are often underestimated by 500% to 1,000%. The gap widens further with agentic AI, which requires 5 to 30 times more tokens per task than standard Gen AI tools, significantly increasing overall inference and operational costs.
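To see why consumption-based pricing makes agentic AI budgets so hard to pin down, here is a minimal Python sketch of monthly inference cost. The price per token, tokens per task, and monthly task volume are hypothetical placeholders, not figures from the research cited above; only the 5x to 30x multiplier comes from the text.

```python
# All inputs below are hypothetical placeholders, not figures from the cited research.
PRICE_PER_MILLION_TOKENS = 10.0  # assumed blended $ per 1M tokens (input + output)
TOKENS_PER_TASK = 2_000          # assumed tokens for one standard Gen AI task
TASKS_PER_MONTH = 500_000        # assumed monthly task volume across the workforce


def monthly_inference_cost(tokens_per_task: float, tasks_per_month: int,
                           price_per_million: float) -> float:
    """Consumption-based pricing: total tokens consumed times the per-token price."""
    return tokens_per_task * tasks_per_month * price_per_million / 1_000_000


standard = monthly_inference_cost(TOKENS_PER_TASK, TASKS_PER_MONTH,
                                  PRICE_PER_MILLION_TOKENS)

# Agentic AI is cited as using 5 to 30 times more tokens per task.
agentic_low = monthly_inference_cost(TOKENS_PER_TASK * 5, TASKS_PER_MONTH,
                                     PRICE_PER_MILLION_TOKENS)
agentic_high = monthly_inference_cost(TOKENS_PER_TASK * 30, TASKS_PER_MONTH,
                                      PRICE_PER_MILLION_TOKENS)

print(f"Standard Gen AI: ${standard:,.0f}/month")
print(f"Agentic AI:      ${agentic_low:,.0f} to ${agentic_high:,.0f}/month")
```

Because cost scales linearly with tokens consumed, the same task volume moves the monthly bill from $10,000 to somewhere between $50,000 and $300,000 under these assumptions. A budget sized on the standard figure is the kind of estimate that breaks once agents reach production.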

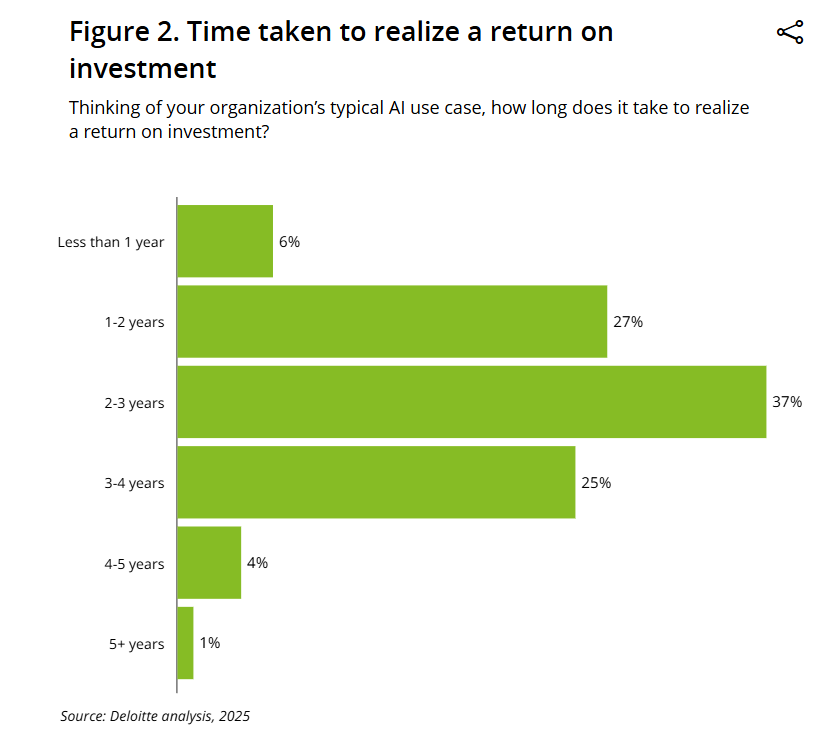

Returns are not keeping pace with this spending curve. For example, Deloitte's 2025 analysis shows that only 6% of organizations realize AI returns in under a year, while the largest share, 37%, takes 2 to 3 years. Only 12% of CEOs report both cost and revenue benefits from AI, while 56% say they have not yet seen a significant financial impact.

This gap now shapes how finance leaders respond to AI proposals, especially generative AI proposals from business leaders who must justify large, uncertain technology investments. Around 37% of CFOs have already paused some capital spending while continuing to protect AI budgets. The shift is away from broad experimentation and toward investments with clear financial outcomes that can be defended before boards and shareholders.

"I think a lot of CFOs like me are having to balance where to tighten the belt and where to invest to drive long-term growth and shareholder value. A blank check for AI makes that very difficult to do."

— Steve Bailey, CFO, Match Group

Across these patterns, the signal is consistent. The constraint isn’t the model or the technology itself. The real issue is the lack of a clear financial framework for AI initiatives before deployment, making it difficult for CFOs to evaluate risk, cost, and return with confidence.

Next, we’ll discuss three structural failure modes that show how this gap in the business case for AI persists across enterprises.

Why Most AI Business Case Studies Never Make It Past the CFO

The fear CFOs have about AI proposals is rarely technical. Most proposals aren’t rejected because the model is wrong or the use case is irrelevant. They are rejected because the business case is built in the wrong direction, starting with technology and trying to justify financial value later.

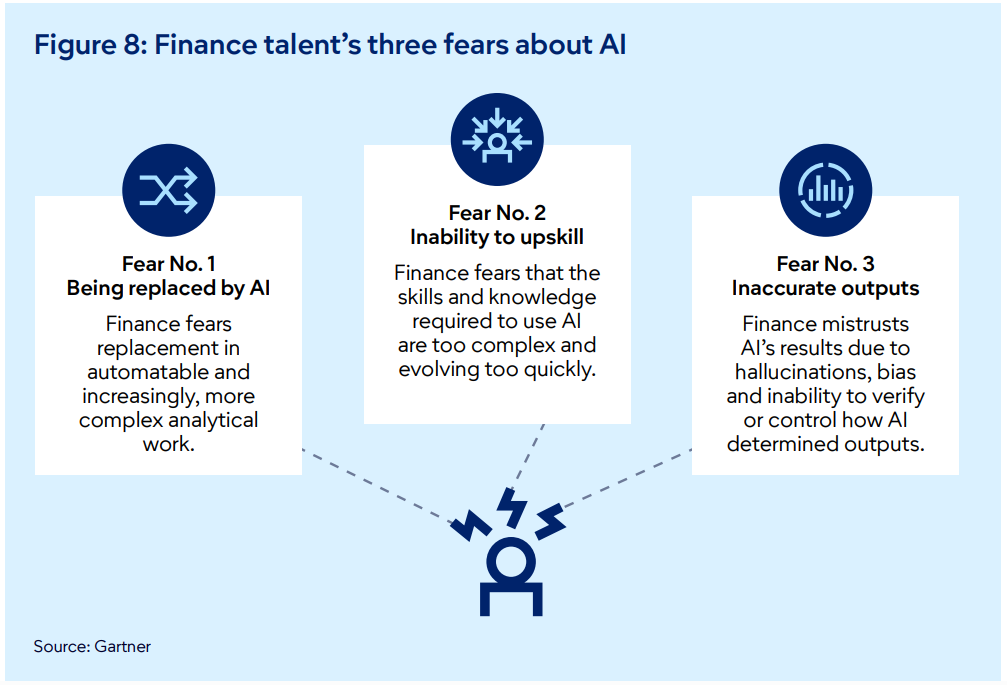

Figure 8 in Gartner's Finance 2030 report clearly shows why. Finance teams carry three specific fears about AI: being replaced, inability to upskill, and inaccurate outputs, each of which makes unquantified proposals harder to approve and easier to reject.

CFOs evaluate capital allocation decisions on cost, return, and risk, not on model capability. By the time the proposal reaches the CFO, it often reads like a technology solution seeking financial justification rather than a clearly defined business outcome, making ROI seem like an assumption rather than a measurable starting point.

Here are three structural failure modes behind why the business case for AI is often rejected:

- No Financial Baseline Before Deployment: Most AI proposals claim productivity improvements or cost reductions without documenting the current financial baseline. This leaves CFOs without a clear denominator to validate ROI or track post-deployment accountability. It creates a structural gap where teams move forward with deployment but later struggle to prove measurable impact when boards ask for before-and-after results. Nearly 50% of generative AI projects are abandoned after the proof-of-concept stage, not because the technology fails, but because business value and measurable success metrics were never clearly defined.

- Technology Framing Instead of Financial-Lever Framing: AI proposals frequently focus on system capabilities without linking them to a specific financial lever. This turns the business case into a technology narrative rather than a capital allocation request. CFOs look for cost reduction, revenue acceleration, or risk mitigation, yet most proposals are built by technology teams first and reviewed by finance later, resulting in weak financial framing. This gap helps explain why 88% of organizations use AI in at least one business function, while only about 39% report measurable enterprise-level EBIT impact: adoption is widespread, but financial outcomes remain limited.

- Risk Reduction Treated as a Compliance Footnote: AI proposals often mention governance and compliance risks but rarely assign a clear financial value to risk reduction, leaving the downside unquantified in capital allocation decisions. When potential losses, operational exposure, or compliance costs aren’t modeled in financial terms, CFOs tend to default to caution because the proposal asks for investment while leaving uncertainty unresolved. Only 14% of finance chiefs report seeing a clear, measurable impact from AI investments to date, which shows how difficult it is to secure approval when the financial implications of risk aren’t clearly documented.
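The three failure modes map onto the inputs an ROI calculation needs. The sketch below is a hypothetical Python structure, not a standard template: it refuses to compute ROI without a pre-deployment baseline and counts benefit only through the three levers CFOs look for, namely cost, revenue, and quantified risk reduction. All field names and dollar figures are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIBusinessCase:
    """Hypothetical structure for illustration; field names are not a standard."""
    baseline_annual_cost: Optional[float]  # current-state cost, documented before deployment
    projected_annual_cost: float           # expected cost of the same process after deployment
    total_investment: float                # build plus run cost over the evaluation period
    years: int                             # length of the evaluation period
    annual_revenue_uplift: float = 0.0     # revenue lever, if any
    annual_risk_reduction: float = 0.0     # quantified expected loss avoided per year


def roi(case: AIBusinessCase) -> float:
    """Return ROI over the evaluation period as a fraction (1.0 means 100%)."""
    if case.baseline_annual_cost is None:
        # Failure mode 1: without a baseline there is nothing to measure savings against.
        raise ValueError("missing pre-deployment baseline")
    annual_benefit = (
        (case.baseline_annual_cost - case.projected_annual_cost)  # cost lever
        + case.annual_revenue_uplift                              # revenue lever
        + case.annual_risk_reduction                              # risk lever
    )
    return (annual_benefit * case.years - case.total_investment) / case.total_investment


case = AIBusinessCase(
    baseline_annual_cost=4_000_000,
    projected_annual_cost=2_500_000,
    total_investment=3_000_000,
    years=3,
    annual_risk_reduction=500_000,
)
print(f"ROI: {roi(case):.0%}")  # prints "ROI: 100%"
```

Leaving `baseline_annual_cost` as `None` raises an error instead of returning an optimistic number, which mirrors the accountability gap described in the first failure mode.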

Next, we’ll explore three enterprise AI case studies, each addressing one of these failure modes, to show how leading organizations structured the business case for AI around financial baselines, financial levers, and quantified risk reduction.

How General Mills Made Finance the Scorekeeper for AI

Most enterprise AI proposals reach the CFO’s desk after key technology decisions have already been made. The model is selected, the use case is defined, and finance is asked to approve a budget for something it didn’t help design. Without a financial baseline established before deployment, there is no clear denominator for ROI and no structured way to measure results later.

General Mills encountered this challenge as it began scaling AI across its operations. The company had been increasing digital and data investments since 2019, and the number of AI initiatives was steadily growing. However, investment decisions lacked a consistent financial measurement framework to connect these technology efforts to measurable business outcomes.

To fix this, General Mills changed the structure before scaling further. Chief Digital and Technology Officer Jaime Montemayor formed a cross-functional leadership group that included HR, legal, finance, and supply chain leaders. This brought finance into the design stage early and ensured that AI initiatives were evaluated against clear financial metrics and measurable business outcomes.

This structure made finance responsible for tracking performance and aligning technology investments with measurable returns, making financial accountability part of the deployment process from the start.

“The finance team is the one that is helping us keep the score.”

— Jaime Montemayor, Chief Digital and Technology Officer, General Mills

This made the CFO a co-author of the business case for AI rather than a late-stage approver, ensuring that financial baselines and measurement criteria were defined before any AI model went live.

The impact became visible in February 2025, when CFO Kofi Bruce linked AI initiatives directly to financial outcomes at an investor conference. AI models analyzing more than 5,000 daily shipments had generated over $20 million in savings since fiscal 2024, while real-time manufacturing data was projected to deliver more than $50 million in waste reduction.

These results were defensible because the financial baseline was defined before deployment, allowing AI performance to be measured against clear, finance-approved metrics.

How HPE Turned the Business Case for AI Into a Financial Decision

Proposals pitching the business case for AI often fail CFO review, not because the technology is wrong, but because the framing is. When proposals lead with what the model does rather than which financial problem it solves, they read like technology pitches, and by the time finance is asked to approve them, the financial lever is missing from the conversation.

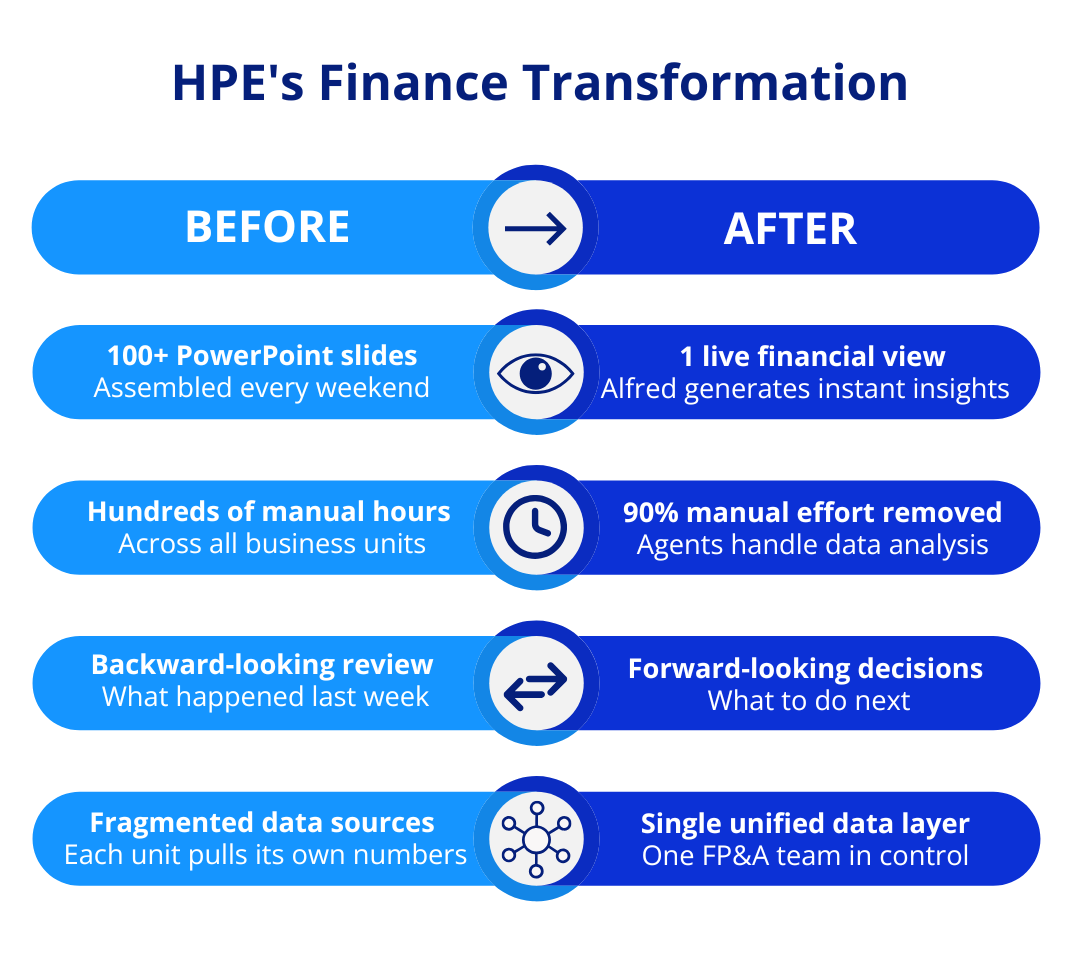

For instance, HPE faced this challenge in its weekly operational performance reviews across business units. Preparing more than 100 PowerPoint slides every weekend required hundreds of hours of manual effort from finance teams.

The 90-minute Monday review call then focused on reviewing this compiled data, making the process largely backward-looking and leaving limited time for forward-looking financial decisions.

CFO Marie Myers framed this as a financial and operational problem, not a technology one. The goal was to move the finance function from reporting what happened to guiding what happens next.

Before introducing AI, the company centralized Monday review preparation within a single FP&A organization, bringing business units into a single coordinated workflow rather than having each team pull its own data. This reduced fragmentation and created a standardized data foundation. AI was introduced only after this structure was in place.

HPE partnered with Deloitte to build CFO Insights, known internally as Alfred, an agentic AI platform running on HPE’s Private Cloud AI infrastructure. AI agents now handle the analytical work that analysts previously performed manually, pulling shipment and revenue data and running the required calculations. The results are presented in a standardized, live performance view that directs leadership’s attention to decision points instead of slide preparation.

"AI isn't on the horizon; it's here. In 2026, AI will move beyond experimentation to become a core enabler of finance operations. Success will hinge on strong governance, human oversight, ROI discipline, and building digital acumen that empowers talent."

— Marie Myers, EVP and CFO, Hewlett Packard Enterprise

HPE’s financial outcomes were measurable and specific. Alfred removed approximately 90% of the manual effort from the weekly review. Reporting cycle time fell by about 40%. Processing costs dropped by at least 25%. The Monday call shifted from a backward-looking summary to a forward-looking discussion about what to do next.

HPE's finance transformation moved from fragmented manual reporting to a single live financial view.

The starting point here was never the model but the financial problem the model needed to solve. By defining the financial lever first and building AI around it, HPE made the CFO the driver of financial discipline and made the AI proposal a defensible capital allocation decision from the outset.

How Mastercard Turned Risk Into an AI Investment Case

Generative AI proposals often mention risk but rarely quantify it. Risk usually appears as a compliance requirement or a general statement about potential exposure, making it difficult for CFOs to treat risk reduction as a financial lever.

Mastercard approached its AI investment differently by starting with the financial cost of external threats. In 2024, cybercrime was projected to cost $9.2 trillion globally, while American consumers lost a record $10 billion to fraud the previous year, making inaction a measurable financial risk.

Against this backdrop, Mastercard committed $7 billion to cybersecurity over five years. The investment was framed not as compliance, but as a direct financial response to a quantified global threat. The threat was evolving quickly, with AI-enabled fraud such as deepfakes and synthetic identities making traditional rules-based detection systems ineffective.

Mastercard responded by building a more advanced AI-driven detection system. Over time, the company moved from rules-based monitoring to multiple machine learning techniques and eventually to generative AI, with models capable of analyzing transaction behavior in real time.

In early 2024, Mastercard launched Decision Intelligence Pro, a generative AI tool that scans one trillion data points in under 50 milliseconds to predict whether a transaction is legitimate. Fraud detection improved by an average of 20%, with some cases seeing gains of up to 300%.

The scale of Mastercard’s global network strengthened the investment logic. Processing around $9 trillion in transactions annually allowed the company to detect fraud patterns across institutions, making network-wide intelligence a financial advantage that individual banks could not replicate.

"We get to see the data not only for a single customer, but we get to see it network-wide. We have trained our models in a manner to be able to, with a high degree of efficacy, identify when there is a network-level fraud event which might be taking place."

— Sachin Mehra, CFO, Mastercard

Mastercard extended this strategy further in September 2024 by acquiring Recorded Future for $2.65 billion, adding real-time global threat intelligence across thousands of organizations and government clients. The logic remained consistent: staying ahead of cyber threats is financially cheaper than responding after losses occur.

When the cost of inaction is quantified and tied to external benchmarks, risk reduction becomes a measurable financial lever. This makes the financial consequences of inaction visible and turns risk management into a defensible AI investment case.
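One common way to put a dollar value on risk is annualized loss expectancy (ALE): the expected loss per incident (single loss expectancy, SLE) multiplied by the expected number of incidents per year (annualized rate of occurrence, ARO). This is a standard risk-management formula, not a description of Mastercard's internal model, and every number in the Python sketch below is hypothetical.

```python
# Hypothetical figures for illustration; they are not Mastercard's numbers.
def annualized_loss_expectancy(single_loss: float, annual_rate: float) -> float:
    """ALE = single loss expectancy (SLE) x annualized rate of occurrence (ARO)."""
    return single_loss * annual_rate


def net_risk_reduction(single_loss: float, rate_before: float,
                       rate_after: float, annual_control_cost: float) -> float:
    """Expected loss avoided per year, minus what the AI control costs to run."""
    ale_before = annualized_loss_expectancy(single_loss, rate_before)  # cost of inaction
    ale_after = annualized_loss_expectancy(single_loss, rate_after)
    return (ale_before - ale_after) - annual_control_cost


cost_of_inaction = annualized_loss_expectancy(2_000_000, 4)
net_value = net_risk_reduction(2_000_000, rate_before=4, rate_after=1.5,
                               annual_control_cost=3_000_000)
print(f"Cost of inaction: ${cost_of_inaction:,.0f}/year")     # $8,000,000/year
print(f"Net annual value of the control: ${net_value:,.0f}")  # $2,000,000
```

Under these assumptions, the ALE before deployment is the cost of inaction, and the control earns its place in the budget only if the expected loss it removes exceeds its running cost.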

Why CFO-Led AI Strategies Reach Scale

When Intuit's CFO reported nearly $90 million in annualized efficiencies from AI, that number wasn’t a surprise. It was the result of a discipline that started long before any model was selected. Every initiative was tied to a measurable financial outcome before deployment, not after.

That sequence is what separated every approved investment examined here. Not the model, not the vendor, not the use case. The financial baseline came first, the risk was quantified upfront, and the CFO was part of the process from the start, not the last person in the room.

Most AI roadmaps describe what the system does but never define what changes on the P&L. That gap is where proposals stall. Before building the business case for AI, three questions need to be answered: what does the current state cost, what does post-deployment impact deliver in measurable financial terms, and what is the dollar value of the risk being eliminated? If the proposal can’t answer all three, it isn’t ready for CFO review.
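The three questions can be turned into a simple pre-review gate. In this hypothetical Python sketch, the field names are illustrative assumptions, and an answer counts only if it is a number, so placeholders such as "TBD" are flagged as gaps.

```python
from typing import Any, Dict, List

# The three questions from the text, keyed by hypothetical field names.
QUESTIONS = {
    "current_state_cost": "What does the current state cost?",
    "post_deployment_impact": "What does post-deployment impact deliver in measurable financial terms?",
    "risk_value_eliminated": "What is the dollar value of the risk being eliminated?",
}


def unanswered(proposal: Dict[str, Any]) -> List[str]:
    """Return every question the proposal has not answered with a dollar figure."""
    return [
        question
        for field, question in QUESTIONS.items()
        if not isinstance(proposal.get(field), (int, float))
    ]


def ready_for_cfo_review(proposal: Dict[str, Any]) -> bool:
    """A proposal is ready only when all three questions have numeric answers."""
    return not unanswered(proposal)


draft = {"current_state_cost": 4_000_000, "post_deployment_impact": 1_500_000,
         "risk_value_eliminated": "TBD"}
print(ready_for_cfo_review(draft))  # False
print(unanswered(draft))  # ['What is the dollar value of the risk being eliminated?']
```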
