How Unilever, Epic, and Bank of America Linked AI ROI to the P&L

AI ROI isn’t just about efficiency, though most companies stop there. When they go further and focus on customer outcomes, AI starts driving real revenue and measurable P&L impact.

88% of enterprises deploy AI, but only 6% show real financial impact.

AI programs stall when they prioritize internal efficiency over customer outcomes.

Unilever, Epic, and Bank of America tied AI ROI to outcomes by defining them before building solutions.

Brian Keene had spent the better part of a morning sitting through AI presentations at the Citi Global Technology, Media and Telecommunications Conference in September 2025. From one company to another, the conversation was the same: efficiency gains, automation rates, and cost reduction.

When Keene sat down with Genpact CEO BK Kalra, he asked what enterprise clients had actually been saying about AI profitability over the last 12 to 18 months. Kalra's answer was different from everything else Keene had heard that morning.

"Clients are talking a lot about value creation, not only about cost or productivity."

— BK Kalra, President and CEO, Genpact, Citi Global Technology Conference

It was a small distinction with a large consequence. Boards had approved AI budgets based on efficiency. Enterprises had delivered efficiency. Yet broader data tells a different story. In 2026, 56% of CEOs report no measurable financial benefit from AI, and only 12% say AI has delivered both cost and revenue impact.

The tools weren’t failing. They were just aimed at the wrong outcome. Kalra had a number that showed exactly why.

By the end of 2025, Genpact's Advanced Technology Solutions revenue reached $1.204 billion, up 17% year over year, accounting for more than half of the company's total revenue growth. Genpact got there by building one discipline into every client engagement from the start: define the customer outcome first, then work backward to the technology.

This is the same discipline Amazon refers to as “Working Backwards.” The process requires teams to start with the customer experience they want to create and work backward from there until there is clarity on what to build.

"The primary point of the process is to shift from an internal/company perspective to a customer perspective."

— Colin Bryar and Bill Carr, former Amazon executives and authors of Working Backwards

Most enterprise AI programs do the opposite. They start with what is easy to automate and hope it creates customer value somewhere downstream. The ones now showing AI on the income statement didn’t start with an AI ROI calculator. They started with the customer outcome and built everything around it.

Which brings the question most CIOs carry into 2026 budget reviews into sharp focus: what is the ROI of AI when efficiency metrics are no longer enough to secure next year's funding? The answer lies in whether AI is making the company more valuable to customers or just delivering AI cost savings that never reach the P&L.

In this article, we’ll break down three structural gaps that kept AI focused on internal efficiency instead of customer outcomes, and how three companies closed each one to turn AI ROI into measurable P&L impact before their next budget review.

Efficiency Gains Have Stopped Being a Credible Measure of AI Profitability

For most of 2024 and 2025, productivity was the primary justification for AI investment. Hours saved, tasks automated, and headcount held steady formed a clear and measurable case. Boards approved budgets on that basis, and enterprises delivered against it.

But this year, that argument has begun to weaken. A Futurum Group survey of 830 global IT decision makers in early 2026 reflects the shift: as the leading measure of AI profitability and success, productivity declined by 5.8 percentage points.

At the same time, the cost of deploying AI at scale is becoming impossible to ignore. What starts as a pilot expands into enterprise programs that require investment in data infrastructure, integration, internal enablement, and customer-facing products.

As these initiatives grow, so does the total spend. In many cases, the full cost only becomes clear once the program is already underway.

This is what is driving the shift in how AI is evaluated. When the investment reaches the CFO, the conversation changes. Efficiency gains that once justified the program are no longer enough. The question becomes whether the returns justify the cost and where that impact shows up on the income statement.

This shift is also redefining how companies handle AI profitability. In 2025, 80% of enterprises prioritized efficiency. But the highest returns are coming from those that focused on growth and innovation from the start. Efficiency-driven programs are not failing, but they are limited. They reduce costs without necessarily driving business expansion.

The gap between investment and outcomes is becoming harder to ignore. A survey of over 3,200 senior leaders found that while 74% of organizations aim to grow revenue through AI, only 20% have succeeded. Investment is rising, but measurable business results are not keeping pace.

"Some sprayed and prayed rather than systematically asking, 'How will the technology make my company better?'"

— Neil Dhar, Global Managing Partner, IBM Consulting

Organizations losing board support for AI in 2026 are the ones where its impact remained limited. Without a clear link to business growth, AI becomes an expensive capability rather than a measurable driver of ROI.

The Three Structural Gaps Hiding AI ROI From the Board

AI is already part of how most enterprises operate, with various agentic AI initiatives running across organizations. The harder question, now raised in boardrooms, is why so few of these deployments show up in financial results as AI ROI.

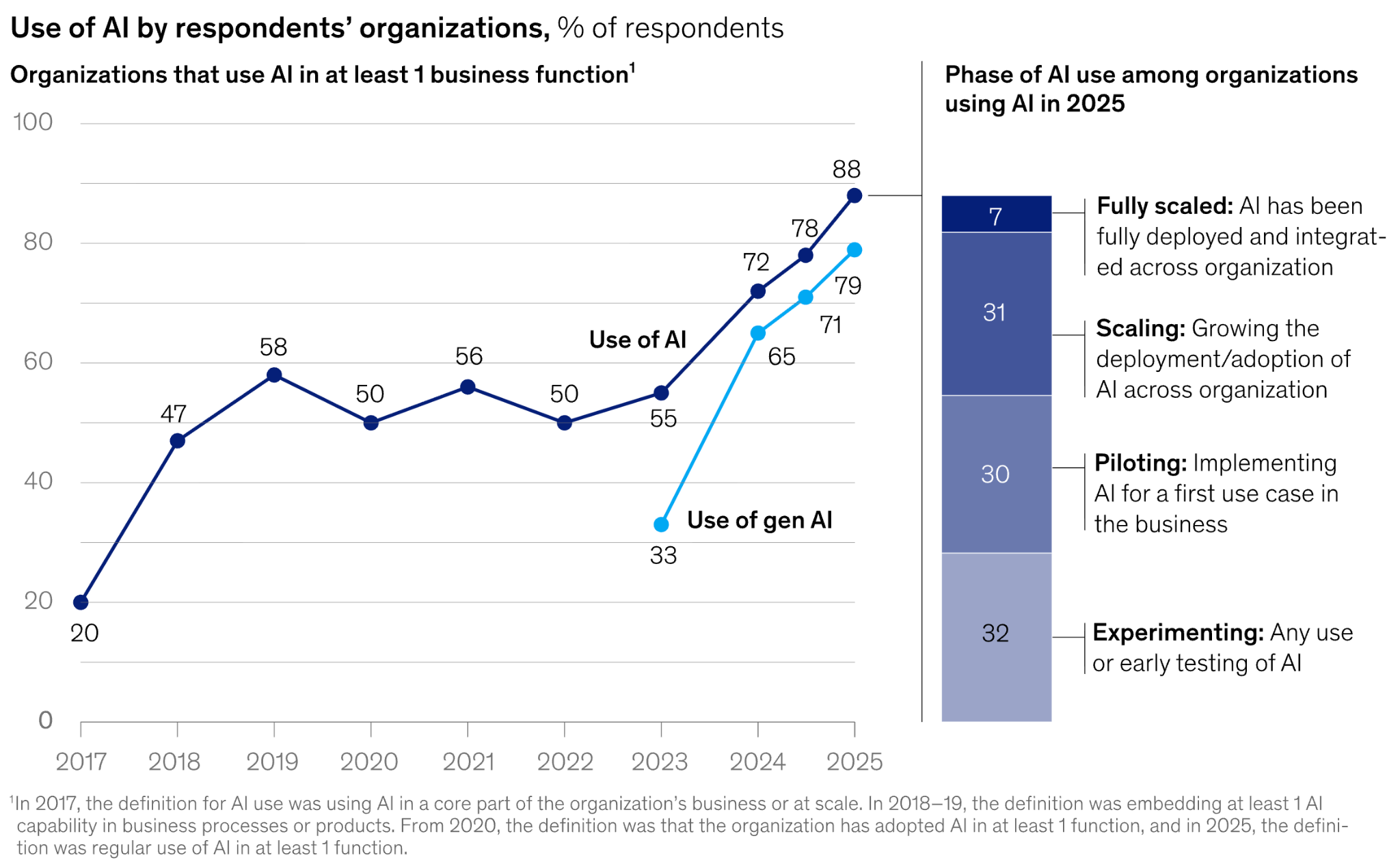

This gap often comes from the structural logic behind how these programs are designed, directed, and measured from the start. For example, McKinsey's State of AI 2025 captures the scale of this gap precisely.

88% of organizations now deploy AI in at least one business function, yet only 6% qualify as high performers, attributing more than 5% of EBIT (Earnings Before Interest and Taxes) directly to AI. Furthermore, only 7% have fully scaled AI across the organization, while the remaining 93% are still experimenting, piloting, or scaling in pockets.

Here are three structural gaps that explain why many AI deployments fail to translate into financial results:

- The Direction Gap: What begins as a customer or revenue-focused initiative often shifts toward internal workflows during execution because they are easier to automate and scale. This pulls AI into back-office efficiency instead of customer impact. Over time, it moves too far from the customer to influence revenue and AI ROI. Only 34% of enterprises use AI to create new products, services, or business models, while most remain focused on efficiency gains.

- The Definition Gap: Many AI programs start without a clear definition of success. There is no agreed customer outcome, no revenue target, and no baseline to measure progress. Teams default to what is easy to build rather than what drives impact. Without defined goals, progress cannot be measured or corrected. Only 14% of global enterprises have a clear AI strategy, while 71% are still working with incomplete or evolving plans, leaving them unsure how to evaluate ROI on enterprise AI investments.

- The Attribution Gap: AI impact is difficult to isolate because it is introduced alongside pricing changes, product updates, and operational shifts. Without a baseline, its contribution blends into overall performance. This makes results difficult to prove at the board level. Only 39% of enterprises report any measurable EBIT impact from AI, and most of those are below 5%, reflecting a lack of structured measurement rather than a lack of underlying value.
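One way to picture the baseline the Attribution Gap calls for is a difference-in-differences comparison: take the change in a metric where AI was deployed and subtract the change over the same period where it was not. The sketch below is illustrative only. The technique is a standard one, not a method attributed to any company in this article, and the function name and all figures are hypothetical.

```python
def did_lift(treated_before, treated_after, control_before, control_after):
    """Difference-in-differences estimate of AI's contribution.

    Subtracts the change seen in a comparison group (no AI) from the
    change seen in the group where AI was deployed, so that shared
    effects such as pricing or seasonality cancel out.
    """
    treated_change = treated_after - treated_before
    control_change = control_after - control_before
    return treated_change - control_change


# Hypothetical weekly revenue per location (made-up numbers).
lift = did_lift(
    treated_before=100.0, treated_after=118.0,   # locations with AI
    control_before=100.0, control_after=108.0,   # comparable locations without AI
)
print(f"Revenue change attributable to AI: {lift}")
```

The comparison only holds if both groups were measured before deployment, which is why the baseline has to exist first.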

Next, let’s walk through three case studies that bridge these gaps and show how leading enterprises closed them, turning AI ROI into a measurable P&L impact using a discipline any CIO can apply before the next budget review.

How Did Unilever Keep AI ROI Tied to Revenue Instead of Internal Efficiency?

AI programs often drift toward internal operations, not because teams choose that direction, but because internal workflows are easier to automate. Back-office processes scale first. Field operations follow later. Unfortunately, in many cases, the customer rarely sees the impact.

Unilever faced this risk as it scaled AI initiatives across its global ice cream business, which operates more than 3 million freezer cabinets across retail locations worldwide. The company had no shortage of internal processes to optimize.

But the question leadership chose to prioritize instead was different: how to ensure product availability at the exact moment a customer makes an impulse purchase decision. That question became the foundation for their AI initiative, outlined in their 2024 annual report within the Business Group Review (around page 30).

Under the Growth Action Plan, investment was anchored to customer availability and demand generation rather than internal efficiency. The AI outcome was defined before the technology was selected, ensuring that deployment decisions remained tied to a commercial objective.

The deployment followed that direction. AI-enabled image capture was installed on 100,000 freezer cabinets to monitor stock levels in real time, trigger replenishment, and provide field teams with data for execution and promotion planning. The company set a target of 350,000 AI-enabled cabinets by 2025, scaling the system based on its impact on availability rather than ease of implementation.

"To win those impulse sales, our brands need to be available at the right place and the right time."

— Sarosh Hussain, Head of Digital Selling Systems, Unilever Ice Cream

This made customer availability the central metric for AI deployment, ensuring that scaling decisions were tied directly to revenue impact rather than internal adoption.

AI-enabled freezers increased retail orders and sales by up to 30% in key markets. Gross margin expanded 280 basis points to 45.0%, the highest in a decade, under the Growth Action Plan.

Unilever’s 2024 performance data reflects how this outward, customer-facing focus translated into revenue outcomes. It shows the impact of keeping AI deployment aligned with customer availability at the point of sale.

Epic Solved AI ROI by Defining Outcomes Before Deployment

Most healthcare AI deployments reach the board as efficiency stories. Productivity improves, operational metrics move, and additional investment is requested. However, the connection to patient outcomes or revenue performance often remains unclear and undefined.

Epic Systems, the EHR platform used by 42% of acute care hospitals in the United States and serving 325 million patients worldwide, identified this as a definition problem rather than a technology problem. Time saved on charting remained at the clinician level. As a result, it appeared as efficiency, but didn’t translate into patient impact or revenue cycle performance.

The AI ROI gains existed; however, the outcomes had never been clearly defined. To fix this, Epic grounded its AI suite in three clear outcome areas before any AI cost-saving tools were launched, covering patient clinical outcomes, health systems' revenue cycle performance, and clinician experience. Each application was built with a defined outcome in mind from the outset.

Art, Epic’s clinician AI, was built to free up time for direct patient care, with voice-assisted documentation capturing notes during meetings so the focus stays on time returned to patients, not transcription speed.

On the financial side, Penny, its revenue cycle AI, targets fewer claim denials and faster prior authorizations, tying directly to health system revenue. Emmie, the patient-facing AI, is designed to reduce system workload while improving patient access and satisfaction.

This system-level integration ensures that each AI capability is tied to a defined outcome, allowing performance to be measured across both individual workflows and the broader enterprise.

"It's saving me time, which is great, but it's also saving my sanity and allowing me to give more attention directly to my patients."

— Kate Ledford, MD, Group Health Cooperative of South Central Wisconsin

This made clinician time a defined outcome rather than a byproduct of efficiency, linking productivity gains directly to patient care. For instance, at Summit Health, Penny reduced the time to submit medication prior authorizations by 42%, with 92% of AI-generated responses accepted without edits.

The outcome clearly shows that the measurement of AI ROI wasn’t defined later. It was built in from the start because the goal was defined before the tool. With that clarity, the baseline is already set, AI ROI measurement becomes straightforward, and the board gets a number it can actually evaluate.

Bank of America Built AI Systems With Attribution Designed In From Day One

At Bank of America, every AI model is attributed to an outcome before it goes live. With more than $4 billion committed annually to technology and innovation and over 270 AI and machine learning models in operation, the company had no shortage of AI activity. The challenge was making each model’s impact traceable.

The solution was structural. Before any initiative moves forward, it starts with what the customer needs. Each use case is tested against 16 parameters, with cost, value, and risk evaluated before deployment.

That sequencing shaped every major deployment with attribution built in from the start. Erica, the customer-facing virtual assistant, was scoped to reduce navigation friction across a complex mobile app, with a clear measurement baseline defined at launch.

For employees, the assistant reduced the IT service desk load, with a directly attributable cost line attached. AI coding tools deployed across 18,000 developers were tied to a defined reinvestment outcome, ensuring productivity gains could be attributed to new growth programs.

"We've already seen 20% productivity boosts coming out of those parts of the lifecycle, which we are now reinvesting next year into new growth programs."

— Hari Gopalkrishnan, CITO, Bank of America

The results were attributable because they were designed to be. Fraud loss rates were cut in half through more than 50 AI-enabled detection models, each tied to defined outcomes that made the impact isolable. Service call volume dropped by 60%, traceable to specific tools linked to specific customer problems.

AI ROI at Bank of America didn’t need to be proven later. It was attributable by design, with every model tied to a clear business outcome from day one.

AI ROI Fails Without Outcome Definition and a Measurable Baseline

When Genpact’s CEO was asked to explain AI's impact on a live investor call, the answer pointed to customer value. Every AI engagement was designed around a measurable customer outcome from the start, not after deployment.

That strategy is what separates AI programs that reach the P&L from those that don’t. The outcome is defined first. The initiative is built around it. Measurement follows because the baseline already exists.

Until now, most enterprises have followed a different path. AI improved efficiency, reduced costs, and accelerated internal processes. These systems worked, but their impact remained operational. It didn’t translate into revenue in a way the board could measure.

That’s why more than 40% of agentic AI projects are projected to be canceled by 2027 due to unclear business value and weak risk controls. The issue is not adoption. It is direction.


Frequently Asked Questions

What separates enterprises that achieve AI profitability from those that don't?

AI profitability follows a clear pattern across the enterprises that have achieved it. The customer outcome was defined before the technology was selected. Genpact built this into every client engagement from the start, and its Advanced Technology Solutions revenue reached $1.204 billion, up 17% year over year. Unilever anchored AI deployment to product availability at the point of sale, and gross margin expanded 280 basis points to 45.0%, the highest in a decade. The enterprises still waiting on AI profitability are those that optimized internal operations first and expected customer value to follow. It rarely does.

Why are AI cost savings no longer enough to protect the budget?

AI cost savings were the primary justification for enterprise AI investment through 2024 and 2025, and boards approved budgets on that basis. By 2026, that argument started to lose ground. Among 830 global IT decision makers surveyed by Futurum Group, productivity declined by 5.8 percentage points as the leading measure of AI success. Boards are no longer asking how much the program saved. They are asking where those savings show up on the income statement and whether AI is also driving revenue growth.

Why is agentic AI ROI so hard to prove?

Agentic AI ROI is hard to prove because the outcome is rarely defined before deployment. More than 40% of agentic AI projects are projected to be canceled by 2027 due to unclear business value and weak risk controls. Agentic systems are more autonomous and more complex, which makes it harder to trace what they actually contributed when no baseline was set. The enterprises most likely to prove agentic AI ROI are those that follow the same approach used at Unilever and Epic. Define the customer outcome first. Build the measurement framework before the system goes live.

Can an AI ROI calculator tell you if your investment is working?

An AI ROI calculator can put a number on efficiency gains like hours saved, tasks automated, and headcount held steady. What it cannot do is close the gap between operational output and financial impact on its own. The 56% of CEOs who reported no measurable financial benefit from AI in 2026 were not short on productivity data. They were short on a defined customer outcome and a revenue baseline to measure against. An AI ROI calculator becomes useful only after those two inputs exist. Without them, it measures the wrong thing accurately.

How do you evaluate ROI on enterprise AI investments before a board review?

Evaluating ROI on enterprise AI investments comes down to three things that need to be defined before deployment begins. First, what customer outcome will the initiative create? Second, which income statement line will it affect? Third, what baseline exists to separate AI's contribution from other business changes happening at the same time? Bank of America tied every one of its 270-plus AI models to a defined outcome before launch, which is why its results were easy to report and defend at the board level. Without those three elements in place, a program is measuring AI activity rather than AI impact.
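As a rough illustration, the three questions can be treated as a pre-deployment checklist. The sketch below is a hypothetical structure, not a tool used by any company mentioned here. The class, field names, and example initiative are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIInitiative:
    """Hypothetical record of what is defined before deployment."""
    name: str
    customer_outcome: Optional[str] = None        # question 1
    income_statement_line: Optional[str] = None   # question 2
    baseline_value: Optional[float] = None        # question 3


def missing_definitions(initiative: AIInitiative) -> List[str]:
    """Return which of the three elements are still undefined."""
    gaps = []
    if not initiative.customer_outcome:
        gaps.append("customer outcome")
    if not initiative.income_statement_line:
        gaps.append("income statement line")
    if initiative.baseline_value is None:
        gaps.append("baseline")
    return gaps


# Made-up example: an outcome is named, but no P&L line or baseline yet.
draft = AIInitiative(name="stock monitoring pilot",
                     customer_outcome="product available at point of sale")
print(missing_definitions(draft))
```

An initiative with an empty list is ready to be measured for impact. Anything else is still measuring activity.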

What is AI ROI, and why is it hard to measure?

AI ROI is the financial return an organization generates from its AI investments, measured against a defined cost and outcome baseline. Most enterprises struggle to measure it because AI programs were built to track operational output rather than financial outcomes. In 2026, 56% of CEOs reported no measurable financial benefit from AI, and only 12% said AI had delivered both cost and revenue impact. The gap is a measurement design problem, not a technology problem.
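In its simplest form, this is the standard return-on-investment ratio: net gain divided by cost. The sketch below assumes the gain has already been isolated against a baseline. The function name and the figures are hypothetical and only illustrate the arithmetic.

```python
def ai_roi(attributable_gain: float, total_cost: float) -> float:
    """Standard ROI ratio: (gain - cost) / cost.

    attributable_gain: financial gain credited to AI after comparing
                       against a baseline (assumed to be known).
    total_cost: full program cost, including infrastructure,
                integration, and enablement.
    """
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (attributable_gain - total_cost) / total_cost


# Made-up figures: 1.5M in attributable gain on 1.0M of total spend.
print(f"{ai_roi(1_500_000, 1_000_000):.0%}")
```

The formula is the easy part. The hard inputs are the attributable gain and the full cost, which is where the measurement design matters.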
